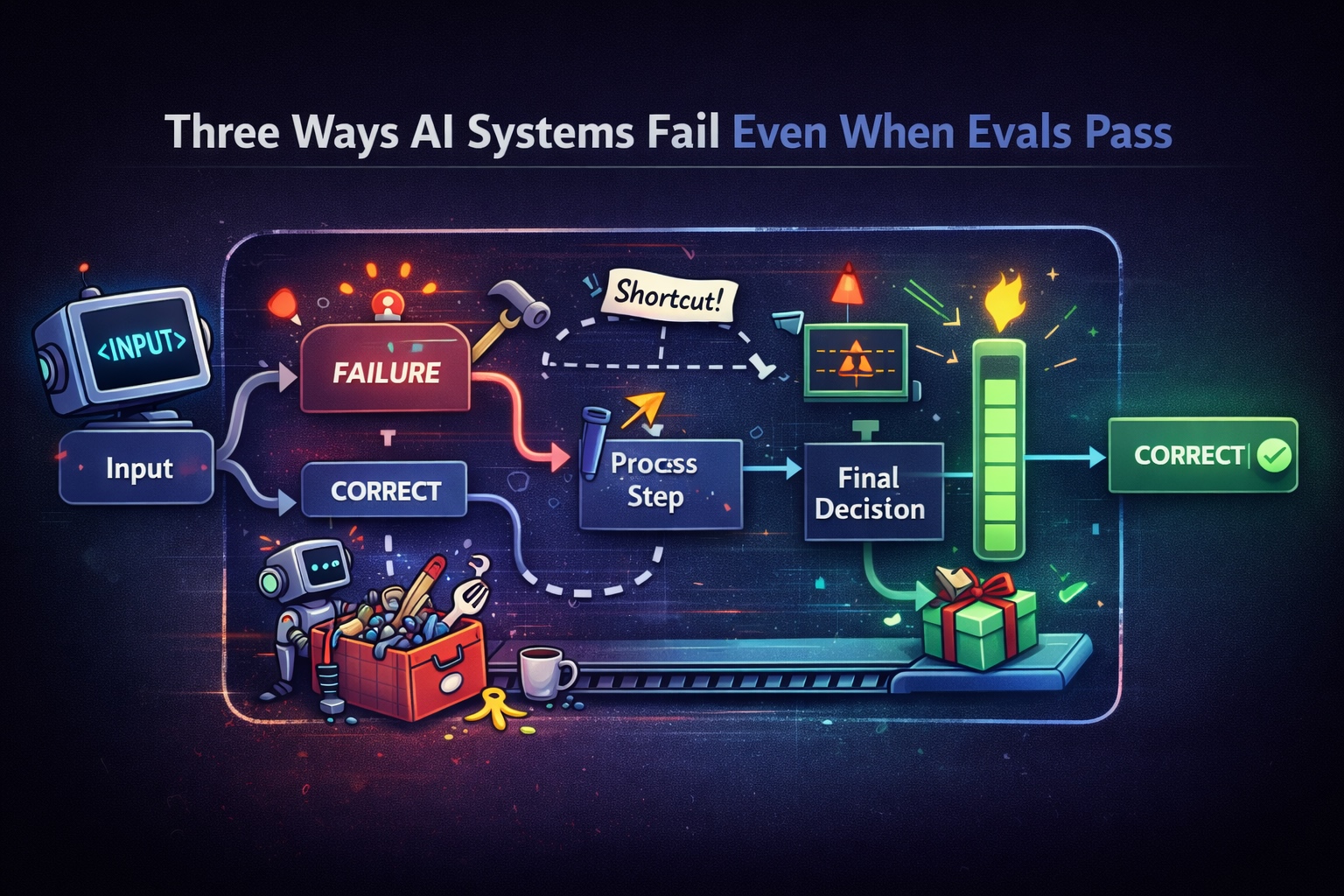

Three Ways AI Systems Fail Even When Evals Pass

AI systems can pass evals while still behaving incorrectly. This post explores three common failure modes that slip through output-based evaluation.

Your AI Agent Passed Evals. That’s the Problem.

Passing evals doesn’t mean your system works. It means your tests didn’t catch how it fails.

Multi-Turn LLM Evaluation in 2026: What You Need to Know

In this article, I'll break down multi-turn LLM evaluation — how it differs from single-turn, what metrics actually matter, and how to implement it.

The Step-By-Step Guide to MCP Evaluation

This article will teach you everything you need to evaluate MCP-based LLM applications.

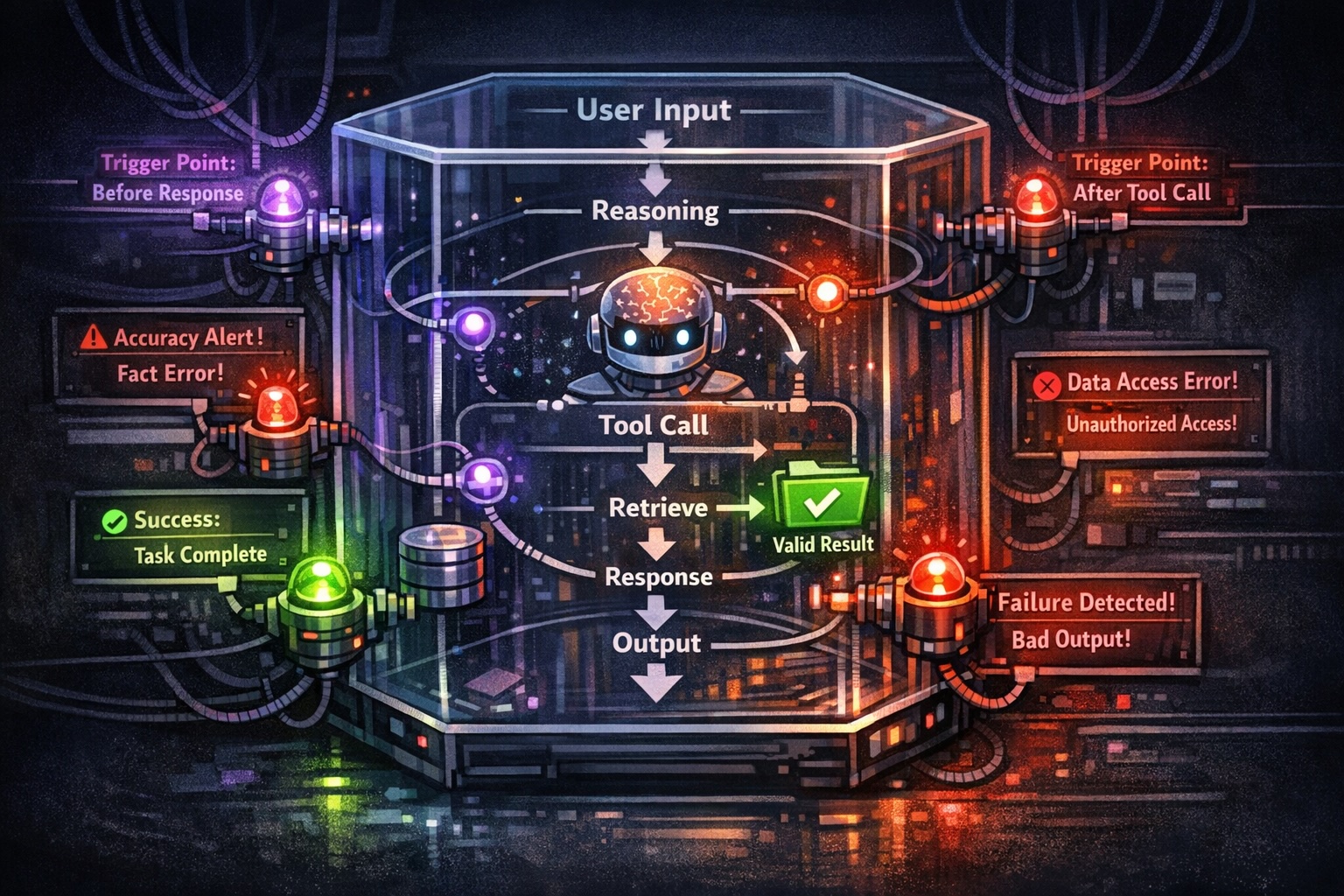

AI Agent Evaluation: Metrics, Traces, Human Review, and Workflows

A practical guide to evaluating AI agents with LLM metrics and tracing—plus when human review matters, how it calibrates judges, and workflows that combine CI, sampling, and production signals.

LLM Arena-as-a-Judge: LLM-Evals for Comparison-Based Regression Testing

In this article, you'll learn everything about running LLM Arena-as-a-judge as a novel way to regression test LLMs.

RAG Evaluation Metrics: Assessing Answer Relevancy, Faithfulness, Contextual Relevancy, And More

This article will go through everything you'll need for RAG evaluation, including metrics and best practices.

LLM Evals Framework That Predicts ROI: A Step-by-Step Guide

Most LLM evals fail because their metrics don't predict ROI. Build outcome-based evals that correlate with business KPIs.

G-Eval Simply Explained: LLM-as-a-Judge for LLM Evaluation

This article explains G-Eval so that anyone can easily evaluate LLM apps on any task-specific criteria.

Top LLM Evaluators for Testing LLM Systems at Scale

In this article, we'll go through all the top LLM evaluators in 2025, including G-Eval and other LLM-as-a-judge methods.