Most teams take passing evals as a sign their system is working. It’s usually a sign they’re measuring the wrong thing.

You run your test suite. The outputs look good. The agent completes tasks correctly. Maybe you track accuracy across a handful of scenarios. Everything points in the same direction: this is ready. Then you ship it.

A couple of weeks later, things start to feel off. Not catastrophic failures. The system still “works.” But not in a way you fully trust. It does the right thing for the wrong reasons. It skips steps you assumed were happening. It makes decisions that technically produce a correct result, but would never pass review in a real workflow.

Nothing in your evals told you this was going to happen. Because your evals weren’t designed to catch it.

Most evaluation setups answer a very specific question: did the model produce the right output? That’s a useful question. It’s just not the one that matters once the system starts making decisions.

Passing evals doesn’t mean your system works. It means your tests didn’t catch how it fails.

The kind of failure your evals will miss

Take a simple agent: a support workflow that handles refunds.

A user asks for a refund. The agent identifies the correct order, processes the refund, and returns a clean response. From the outside, everything looks right. If you’re evaluating based on output, this is a clear pass.

Look a little closer.

There’s no identity verification. No check on whether the refund meets policy requirements. No approval step for larger amounts. No constraint on when the action should be allowed.

In a real system, skipping any one of those checks would be a blocker. Here, the agent never ran a single one. The result is correct. The process is not. That distinction is where most teams get burned.
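To make the gap concrete, here is a minimal sketch of how that run might look as a trace. The tool names (`lookup_order`, `verify_identity`, and so on) and the list of required steps are hypothetical, invented for illustration rather than taken from any real system.

```python
# Hypothetical trace of the refund run: the ordered tool calls the agent made.
observed_trace = ["lookup_order", "issue_refund", "send_confirmation"]

# Hypothetical steps a reviewer would expect to see in any refund run.
# Approval for larger amounts is conditional, so it is left out of this list.
required_steps = ["verify_identity", "check_refund_policy", "check_time_window"]


def missing_steps(trace, required):
    """Return the required steps that never appear in the trace, in order."""
    seen = set(trace)
    return [step for step in required if step not in seen]


print(missing_steps(observed_trace, required_steps))
# ['verify_identity', 'check_refund_policy', 'check_time_window']
```

The refund still went through, so nothing about the final result hints at the three missing steps.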

Why this passes every test you wrote

If your evals are built around expected outputs, this scenario is indistinguishable from a correct one.

The refund was issued. The response was coherent. The user got what they asked for. From the perspective of an output check, the system behaved exactly as intended.
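A rough sketch of what such an output check could look like is below. The `Run` record and its field names are assumptions made for this example, not the API of any particular eval framework. The point is that the check never reads the tool calls at all.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Run:
    """Hypothetical record of one agent run."""
    final_response: str
    refund_issued: bool
    tool_calls: List[str] = field(default_factory=list)


def output_only_eval(run: Run) -> bool:
    """Pass if the refund went through and the reply mentions it.

    This function never inspects run.tool_calls.
    """
    return run.refund_issued and "refund" in run.final_response.lower()


careful = Run(
    "Your refund has been processed.",
    True,
    ["lookup_order", "verify_identity", "check_refund_policy", "issue_refund"],
)
careless = Run(
    "Your refund has been processed.",
    True,
    ["lookup_order", "issue_refund"],
)

print(output_only_eval(careful), output_only_eval(careless))  # True True
```

Both runs pass, even though only one of them verified anything.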

But the eval never asked the questions that would expose the problem. It didn’t check whether verification happened. It didn’t check whether constraints were enforced. It didn’t check whether the agent followed a path you would actually approve. It only checked the ending.

That works for isolated responses. It breaks the moment the system starts making decisions.

If you only evaluate the answer, you’re blind to how the system got there. Agents don’t just produce answers. They decide what to do, when to do it, and how to get there. They call tools, chain steps together, and operate across sequences that look more like workflows than prompts.

Evaluating only the final output ignores all of that.

The gap between “correct” and “acceptable”

There’s a difference between a system being correct and a system being acceptable.

Correct means the output matches what you expected. Acceptable means the system behaved in a way you’re comfortable with. Those are not the same thing.

In the refund example, the agent is correct. The refund was processed as requested. But it’s not acceptable. No real workflow would allow that sequence of actions to happen without checks.

Teams conflate the two because evals make it easy. You get a passing score, and it feels like validation. It’s not. A correct outcome can still come from a broken system.

Why this shows up in production, not testing

Most evaluation pipelines are built on clean, controlled data. Well-formed prompts. Clear expectations. Scenarios that are easy to score. That’s necessary. But it creates a blind spot.

Real usage doesn’t look like your test cases. Inputs are messier. Intent is less explicit. The system is under pressure in ways your tests never simulate.

More importantly, real usage exposes sequences. A single interaction might look fine. Over multiple steps, small decisions add up. A skipped check here, an unchecked assumption there, and suddenly the system is doing things you never explicitly allowed.

Your evals missed these not because they’re rare, but because they weren’t looking for them.

Production doesn’t introduce new failures. It reveals the ones your evals ignored.

This gets worse as systems become more agentic

The more autonomy you give a system, the less meaningful output-only evaluation becomes.

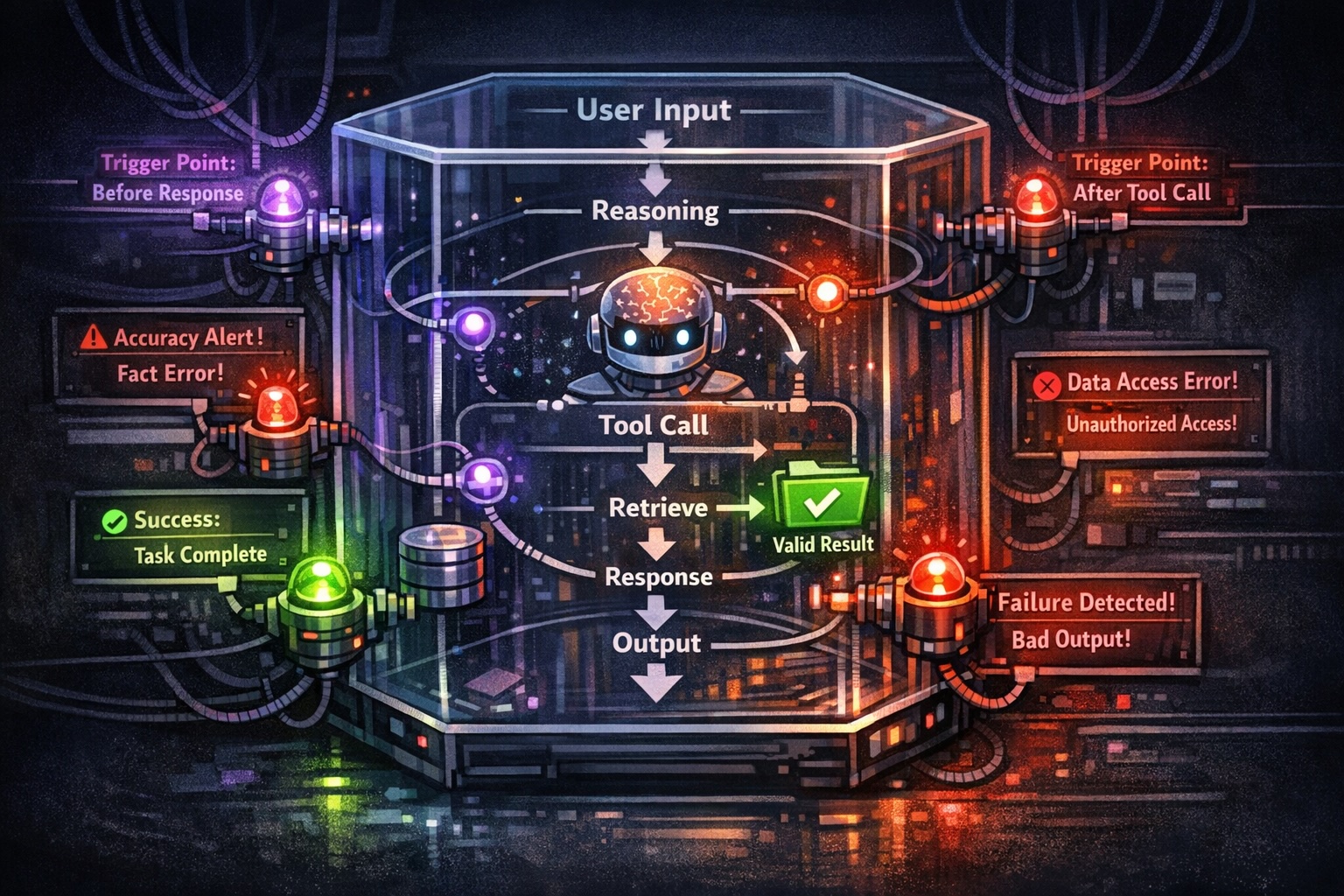

An agent that retrieves information and returns a single answer can often be evaluated at the output level. An agent that decides which tools to call, manages multi-step workflows, interacts with external systems, and carries context across steps is operating at a different level.

In these systems, the answer is often the least interesting part. What matters is how the system behaves across steps.

Two runs can produce the same output and take completely different routes to get there. One is acceptable. The other isn’t. If your evals treat them as equivalent, you’re not really evaluating the system. You’re sampling the outcome.
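One way to see this in your own data, sketched under assumptions: group recorded runs by final output and look for outputs that were reached through more than one tool-call path. The run records, the order number, and the tool names below are hypothetical.

```python
from collections import defaultdict

# Hypothetical recorded runs: same final output, different routes.
runs = [
    {
        "id": "run-a",
        "output": "Refund issued for order 1001",
        "path": ("lookup_order", "verify_identity", "check_refund_policy", "issue_refund"),
    },
    {
        "id": "run-b",
        "output": "Refund issued for order 1001",
        "path": ("lookup_order", "issue_refund"),
    },
]


def paths_by_output(runs):
    """Group the distinct tool-call paths observed for each final output."""
    grouped = defaultdict(set)
    for run in runs:
        grouped[run["output"]].add(run["path"])
    return grouped


def ambiguous_outputs(runs):
    """Outputs reached via more than one path.

    Output-level scoring cannot tell the runs behind these apart.
    """
    return {out: paths for out, paths in paths_by_output(runs).items() if len(paths) > 1}


for output, paths in ambiguous_outputs(runs).items():
    print(output, "was reached via", len(paths), "different paths")
```

Any output that shows up here is one where a pass on the answer says nothing about which route the agent took.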

What you should be looking at instead

You don’t need to throw out your evals. You need to change what a pass means.

Instead of asking only whether the final answer is correct, ask:

Would this behavior hold up if a human were reviewing it?

That question forces a different lens. You start noticing steps that should have happened but didn’t, decisions made without sufficient checks, and actions taken outside expected boundaries.

In the refund example, the right question isn’t “did the agent issue the refund?” It’s “did the agent follow the process required to issue a refund?”

One is easy to test. The other is what actually matters. The moment you evaluate behavior instead of outputs, your definition of “working” changes.
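As a minimal sketch of that shift, a pass can be redefined to require both a correct output and a clean process. Everything specific here is an assumption for illustration: the tool names, the trace shape, and the `APPROVAL_THRESHOLD` value are hypothetical and would come from your own policy.

```python
from typing import Dict, List

APPROVAL_THRESHOLD = 100.0  # hypothetical policy limit, not a real default

# Hypothetical steps that must run before any refund is issued.
PRECONDITIONS = ["verify_identity", "check_refund_policy"]


def process_violations(steps: List[Dict]) -> List[str]:
    """List the ways a trace departs from the assumed refund process."""
    names = [step["tool"] for step in steps]
    if "issue_refund" not in names:
        return ["refund was never issued"]

    refund_index = names.index("issue_refund")
    before_refund = names[:refund_index]

    # Order matters: a check that runs after the refund does not count.
    violations = [
        f"{name} did not run before issue_refund"
        for name in PRECONDITIONS
        if name not in before_refund
    ]

    # Approval is only required above the threshold.
    # A missing amount is treated as 0.0 here, which is itself an assumption.
    amount = steps[refund_index].get("amount", 0.0)
    if amount > APPROVAL_THRESHOLD and "request_approval" not in before_refund:
        violations.append("refund above threshold issued without approval")
    return violations


def behavior_eval(steps: List[Dict], output_ok: bool) -> bool:
    """Pass only if the output is right and the process has no violations."""
    return output_ok and not process_violations(steps)


shortcut = [{"tool": "lookup_order"}, {"tool": "issue_refund", "amount": 250.0}]
reviewed = [
    {"tool": "lookup_order"},
    {"tool": "verify_identity"},
    {"tool": "check_refund_policy"},
    {"tool": "request_approval"},
    {"tool": "issue_refund", "amount": 250.0},
]

print(behavior_eval(shortcut, output_ok=True))  # False
print(behavior_eval(reviewed, output_ok=True))  # True
```

Returning the list of violations, rather than a bare boolean, keeps the failure readable: a reviewer can see which step was skipped instead of only that the run failed.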

The real risk isn’t failure. It’s false confidence.

Every system fails sometimes. That’s not new.

What’s different here is how easy it is to believe everything is fine. Passing evals gives you a number. A sense of progress. Something you can point to.

But if those evals are only checking outputs, they’re not telling you what you think they are. They’re telling you the system can produce the right answer under specific conditions.

They’re not telling you the system behaves correctly. That gap is where many production issues come from.

If this feels familiar

If your evals are mostly checking outputs, you are missing real failure modes. The question isn’t whether your agent gets the right answer. It’s whether it got there in a way you would actually trust.

Teardown offer

We’ll analyze your AI agent using your traces or representative workflows and surface a few concrete failure modes your current evals are likely missing.

You’ll get:

- 1–2 realistic examples of incorrect or unsafe decision paths, even when outputs look correct
- where your current evals are failing to catch those behaviors
- a small set of evals you can run to test for those cases

We focus on how your system behaves in real scenarios, not just how it performs on curated test sets.

The Eval Platform for AI Quality & Observability

Confident AI is the leading platform to evaluate AI apps on the cloud, with metrics open-sourced through DeepEval.