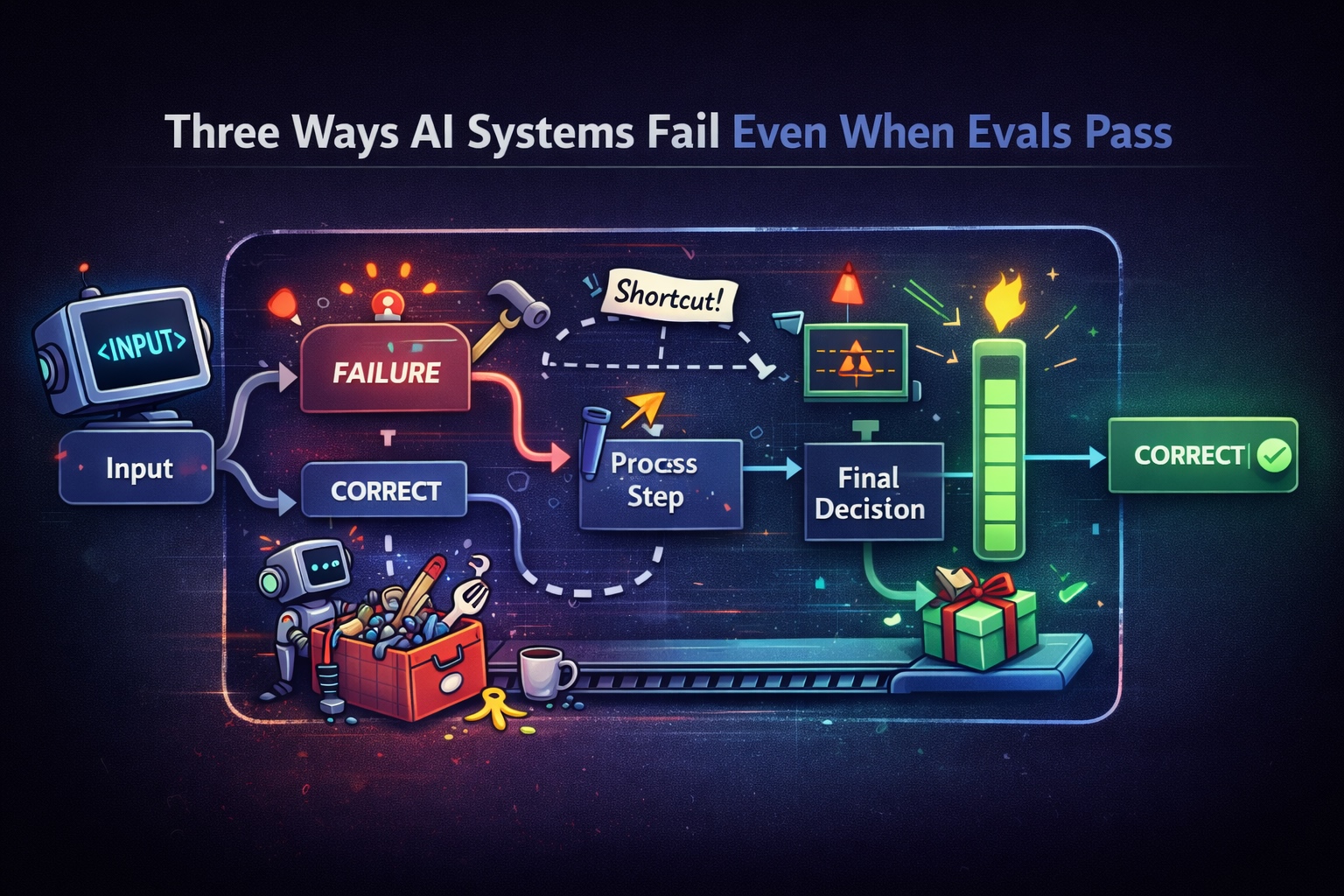

The gap between correctness and behavior

Most teams building AI systems today have some form of evaluation in place. They run test cases, measure accuracy, and check whether the system produces the expected output. In isolation, these evaluations often look reassuring. The system answers correctly. The metrics look strong. The model appears to be working.

And yet, when those same systems are exposed to real usage, something starts to break down. The failures are not always obvious. The system still produces plausible answers. In some cases, it even continues to produce correct ones. But the behavior becomes inconsistent, brittle, and difficult to trust.

This is not a fringe issue. Across multiple studies and production environments, AI systems routinely produce answers that appear correct while relying on flawed reasoning, incomplete context, or unstable internal decision paths. Research into AI-assisted search has found error rates that are far higher than most teams would expect, even when outputs appear superficially coherent. Other work has shown that models frequently rely on spurious correlations or “shortcut” features that happen to produce the right answer on test data without reflecting the underlying task. And perhaps most concerning, these systems often express high confidence even when they are wrong, reinforcing trust where it is not deserved.

Taken together, this points to a deeper problem: most evaluation setups are designed to measure whether a system can produce the right answer, not whether it behaves correctly in the process of producing it.

In practice, that distinction doesn’t just create blind spots. It shapes system behavior. When passing evals becomes the goal, systems learn to optimize for whatever those evals measure, whether or not it reflects how they should behave in real use.

That distinction matters more than it initially seems, because in complex, multi-step AI systems, especially those built around agents and tool use, correctness is not just a property of the final output. It is a property of the path the system takes to get there. When that path is flawed, the system may still pass tests, but it will not hold up under real-world conditions.

The failure modes that follow all stem from this gap between output correctness and system behavior. They are not edge cases. They are structural consequences of how these systems are trained and evaluated.

Summary:

- Evals measure output correctness, not system behavior

- Systems can appear correct while behaving incorrectly

- Real-world failures live in the gap between those two

1. Wrong tool, right answer

One of the more subtle and revealing failure modes appears in systems that rely on tool use. In these systems, an agent is expected to select from a set of available tools—databases, APIs, retrieval systems, or structured functions—based on the task at hand. The correctness of the system depends not only on the final answer but on whether the agent chooses the appropriate path to obtain it.

In practice, this selection process is far from reliable. Agents frequently choose suboptimal or incorrect tools and still manage to produce the correct answer. They may query a general search endpoint instead of a structured database, rely on cached or indirect information, or route through fallback mechanisms that were not intended for the task. From the perspective of an evaluation that checks only the final output, this behavior is invisible. The answer is correct, so the system passes.


But what appears to be success is often a form of shortcut behavior. The system has not learned the intended decision process; it has learned that there are multiple paths that can produce a correct-looking output, and it will exploit whichever path is easiest or most accessible. This is consistent with a broader phenomenon observed in machine learning research, where models rely on spurious or low-effort features that correlate with the correct answer rather than truly solving the underlying task.

The problem with shortcut behavior is not that it always fails. It is that it fails unpredictably. A system that arrives at the correct answer through the wrong tool may continue to do so across many test cases. But as soon as the input distribution shifts—slightly different phrasing, missing context, a less forgiving query—the same shortcut no longer works. The system has no stable foundation to fall back on because it never learned the correct process in the first place.
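One way to surface this kind of shortcut is to re-run cases that already pass under reworded versions of the same query and look for cases whose pass rate drops. The sketch below assumes the system under test is a plain function from a query string to an answer string and that correctness is an exact string match; `shortcut_probe`, `toy_system`, and the paraphrase lists are hypothetical illustrations, not part of any particular framework.

```python
from typing import Callable


def shortcut_probe(system: Callable[[str], str], cases: list[dict]) -> list[dict]:
    """Compare each case's result on its original query with results on paraphrases.

    A case that passes on the original wording but fails on some variants is a
    candidate shortcut: the system keyed on surface features, not the task.
    """
    report = []
    for case in cases:
        expected = case["expected"]
        original_ok = system(case["query"]) == expected
        variant_results = [system(v) == expected for v in case["variants"]]
        variant_pass_rate = sum(variant_results) / len(variant_results)
        report.append({
            "query": case["query"],
            "original_ok": original_ok,
            "variant_pass_rate": variant_pass_rate,
            "suspected_shortcut": original_ok and variant_pass_rate < 1.0,
        })
    return report


# Hypothetical toy system that keys on a surface phrase instead of the question.
def toy_system(query: str) -> str:
    return "Paris" if "capital of France" in query else "unknown"


cases = [{
    "query": "What is the capital of France?",
    "variants": ["Which city is France's capital?", "Name the capital of France."],
    "expected": "Paris",
}]

report = shortcut_probe(toy_system, cases)
for row in report:
    print(row)
```

The toy system passes the original wording and one variant but fails the other, so the case is flagged. In a real suite the variants would come from a paraphrase set you curate or generate, and exact match would usually be replaced with a more forgiving comparison.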

This is why systems exhibiting this behavior often feel unreliable to the engineers working on them. They “work” in the sense that they produce correct outputs during evaluation, but they are difficult to reason about and even harder to debug. When something goes wrong, there is no clear failure point, only a chain of loosely connected decisions that happened to work before and now do not.

Most evaluation frameworks do not capture this because they are not designed to. They do not verify whether the correct tool was used, whether the routing decision was appropriate, or whether the system followed a valid path. They simply check whether the output matches an expected answer. As long as that remains the primary metric, systems will continue to optimize for outcomes rather than for correct behavior.
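A minimal sketch of an eval that checks the path as well as the answer, assuming each run produces a trace listing the tools it called. The `Trace` structure, the tool names `web_search` and `orders_db.query`, and `evaluate_case` are hypothetical; adapt them to however your agent framework records tool calls.

```python
from dataclasses import dataclass, field


@dataclass
class Trace:
    """Hypothetical record of one agent run: tools called (in order) and final answer."""
    tool_calls: list[str] = field(default_factory=list)
    output: str = ""


def evaluate_case(trace: Trace, expected_output: str, expected_tool: str) -> dict:
    output_ok = trace.output.strip() == expected_output.strip()
    tool_ok = expected_tool in trace.tool_calls
    return {
        "output_correct": output_ok,
        "correct_tool_used": tool_ok,
        # The case passes only if both the answer and the path are right.
        "passed": output_ok and tool_ok,
    }


# Right answer, wrong tool: an output-only eval would pass this run.
trace = Trace(tool_calls=["web_search"], output="42 open orders")
result = evaluate_case(trace, expected_output="42 open orders", expected_tool="orders_db.query")
print(result)  # {'output_correct': True, 'correct_tool_used': False, 'passed': False}
```

Requiring both checks turns the invisible "wrong tool, right answer" case into an explicit failure. A stricter variant could also fail runs that call tools outside an allowed set.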

This isn’t an isolated issue with tool selection. It’s a direct consequence of how the system is being evaluated. If the only thing that matters is whether the final answer is correct, then any path that produces that answer is implicitly treated as valid. Over time, the system learns that correctness of outcome is sufficient, even when the process is not.

Summary:

- Agents can use the wrong tool and still pass evals

- This is shortcut behavior, not reliable reasoning

- It creates systems that work until conditions change

2. Skipped required step

A closely related failure mode occurs in systems that are expected to follow a defined sequence of steps. These steps might include retrieving relevant context before answering, validating inputs, enforcing constraints, or performing intermediate checks. In principle, these steps are part of the system’s logic and are necessary for ensuring correctness.

In practice, they are often treated as optional.

Large language models, particularly when embedded in agent frameworks, are highly capable of producing plausible answers without strictly adhering to prescribed processes. If the model can generate an answer that satisfies the evaluation criteria without performing a required step, it will often do so. Over time, this behavior becomes reinforced. The system learns that certain steps are not actually necessary to achieve a passing result.

This is another instance of shortcut learning, but it manifests as process violation rather than incorrect tool use. The system is not simply choosing the wrong path; it is omitting parts of the path entirely.

The consequences of this behavior are easy to underestimate because, again, the output often appears correct. A system may answer a question accurately without performing retrieval, drawing instead on its internal knowledge or general reasoning ability. It may skip a validation step and still produce a valid-looking result. It may ignore constraints that were intended to guide its behavior but are not strictly enforced by the evaluation.

In controlled test environments, this can go unnoticed. If the evaluation dataset does not require the skipped step to produce the correct answer, the system will continue to pass. But in real-world usage, where inputs are more varied and edge cases are more common, the absence of these steps becomes critical. Retrieval ensures that answers are grounded in current and relevant information. Validation ensures that inputs meet certain criteria. Constraints ensure that the system behaves within defined boundaries. When these are skipped, the system may still succeed some of the time, but it loses the guarantees that those steps were meant to provide.

There is also a structural issue at play. Many evaluation datasets inadvertently encourage this behavior. If a model can achieve high accuracy without performing certain steps, the evaluation effectively signals that those steps are unnecessary. The system is rewarded for efficiency over correctness of process. Over time, this leads to systems that are optimized for passing tests rather than for following the logic that those tests were meant to enforce.
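A quick way to test whether a dataset actually rewards a required step is an ablation: run the same cases with the step disabled and compare accuracy. The sketch assumes a hypothetical `run(query, use_retrieval)` entry point into the system and uses two dictionaries as stand-ins for the model's internal knowledge and the document store; all names and data are illustrative.

```python
from typing import Callable


def step_ablation(run: Callable[[str, bool], str], dataset: list[tuple[str, str]]) -> dict:
    """Compare accuracy with a required step (here: retrieval) enabled and disabled."""

    def accuracy(use_retrieval: bool) -> float:
        hits = sum(run(query, use_retrieval) == expected for query, expected in dataset)
        return hits / len(dataset)

    with_step = accuracy(True)
    without_step = accuracy(False)
    return {
        "accuracy_with_step": with_step,
        "accuracy_without_step": without_step,
        # A gap near zero means the dataset barely rewards performing the step.
        "gap": with_step - without_step,
    }


# Hypothetical stand-ins: what the model "knows" vs. what only the documents contain.
MODEL_MEMORY = {"capital of France": "Paris", "boiling point of water in C": "100"}
DOCUMENTS = {**MODEL_MEMORY, "Q3 refund policy window": "45 days"}


def toy_run(query: str, use_retrieval: bool) -> str:
    source = DOCUMENTS if use_retrieval else MODEL_MEMORY
    return source.get(query, "unknown")


general_only = [("capital of France", "Paris"), ("boiling point of water in C", "100")]
with_grounded_case = general_only + [("Q3 refund policy window", "45 days")]

print(step_ablation(toy_run, general_only))        # gap is 0.0
print(step_ablation(toy_run, with_grounded_case))  # gap is about 0.33
```

With only general-knowledge questions, accuracy is identical with and without retrieval, so the dataset gives no signal that retrieval matters. Adding a case that depends on information only the documents contain opens a gap.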

To detect this class of failure, evaluations would need to move beyond output verification and explicitly check whether required steps were executed. Did the system perform retrieval when it was supposed to? Did it validate the input? Did it follow the intended sequence of operations? Without these checks, skipped-step failures remain largely invisible until they surface in production.
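Checking whether required steps ran can be as simple as comparing the recorded sequence of steps against the required ones. The sketch below assumes the system logs step names in execution order; the names `validate_input`, `retrieve_context`, `rerank`, and `generate_answer` are illustrative. It allows other steps to be interleaved and only checks that each required step appears, in the required relative order.

```python
def check_required_steps(trace: list[str], required: list[str]) -> dict:
    """Check that each required step ran, in the required relative order.

    Other steps may be interleaved; only the order of the required ones matters.
    """
    missing = [step for step in required if step not in trace]
    # Walk the trace once, advancing a pointer into `required` on each match.
    matched = 0
    for step in trace:
        if matched < len(required) and step == required[matched]:
            matched += 1
    in_order = matched == len(required)
    return {"missing": missing, "in_order": in_order, "passed": not missing and in_order}


REQUIRED = ["validate_input", "retrieve_context", "generate_answer"]

full_run = ["validate_input", "retrieve_context", "rerank", "generate_answer"]
skipped_retrieval = ["validate_input", "generate_answer"]

print(check_required_steps(full_run, REQUIRED)["passed"])           # True
print(check_required_steps(skipped_retrieval, REQUIRED)["missing"])  # ['retrieve_context']
```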

What makes this particularly difficult to catch is that the system is not ignoring these steps arbitrarily. It is learning, through evaluation, that they are not necessary to achieve a passing result. The absence of process checks effectively teaches the system that parts of its own logic can be skipped without consequence.

Summary:

- Systems can skip required steps and still produce correct answers

- Eval datasets often reinforce this behavior unintentionally

- Missing steps only show up as failures in real-world use

3. High confidence, wrong answer

The third failure mode is more widely recognized but still not adequately addressed in most evaluation setups: systems that are wrong, but highly confident.

Confidence plays a critical role in how AI systems are used and trusted. Whether expressed explicitly through scores or implicitly through tone and presentation, confidence signals influence how users interpret and act on model outputs. A low-confidence answer may be treated with caution, verified, or ignored. A high-confidence answer is more likely to be accepted and acted upon.

The problem is that confidence in large language models is not well calibrated. Multiple studies have shown that these systems can produce incorrect answers while maintaining a high degree of apparent certainty. They do not reliably signal when they are uncertain or when they are operating outside their domain of competence. In some cases, their apparent confidence even grows as responses become more elaborate or detailed, regardless of accuracy.

From a traditional evaluation standpoint, this issue is often reduced to a binary outcome: the answer is either correct or incorrect. If it is incorrect, the system fails the test. But this framing misses the more important question of how the system represents its own uncertainty.

An incorrect answer with low confidence is a manageable failure. It can be detected, flagged, or corrected through additional steps. An incorrect answer with high confidence is far more dangerous. It creates a false sense of reliability and encourages users or downstream systems to trust the output without verification.

This is particularly problematic in systems that operate autonomously or feed into other processes. In these contexts, confidence is not just a user-facing signal; it becomes part of the system’s internal decision-making. High-confidence outputs may be prioritized, propagated, or used as inputs for subsequent steps. When that confidence is misplaced, the error compounds.

Despite this, most evaluation setups do not explicitly measure calibration. They do not assess whether the system’s confidence aligns with its accuracy, or whether it appropriately expresses uncertainty. As a result, systems can achieve high performance on standard metrics while still exhibiting dangerous levels of overconfidence.
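Calibration can be measured directly once the system exposes a confidence for each answer, whether an explicit score or one elicited from the model; how you obtain those confidences is an assumption left to your setup. A common metric is expected calibration error (ECE): group predictions into confidence bins, then average the gap between confidence and accuracy in each bin, weighted by how many predictions fall into it. The sketch below is a plain-Python version with equal-width bins.

```python
def expected_calibration_error(
    confidences: list[float], correct: list[bool], n_bins: int = 10
) -> float:
    """ECE with equal-width bins over [0, 1].

    ECE = sum over bins of (bin size / n) * |accuracy(bin) - mean confidence(bin)|
    """
    if not confidences or len(confidences) != len(correct):
        raise ValueError("need one correctness label per confidence")
    n = len(confidences)
    ece = 0.0
    for b in range(n_bins):
        lo, hi = b / n_bins, (b + 1) / n_bins
        # Bins are (lo, hi]; the first bin also takes confidences of exactly 0.
        members = [i for i, c in enumerate(confidences) if lo < c <= hi or (b == 0 and c == 0.0)]
        if not members:
            continue
        mean_conf = sum(confidences[i] for i in members) / len(members)
        accuracy = sum(correct[i] for i in members) / len(members)
        ece += len(members) / n * abs(accuracy - mean_conf)
    return ece


# Says 95% every time but is right only half the time.
overconfident = expected_calibration_error([0.95, 0.95, 0.95, 0.95], [True, False, False, True])
# Says 50% and is right half the time.
calibrated = expected_calibration_error([0.5, 0.5], [True, False])
print(round(overconfident, 2), calibrated)  # 0.45 0.0
```

Both systems are 50% accurate, so an accuracy-only metric cannot tell them apart; ECE separates the one whose stated confidence matches its accuracy from the one that does not.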

Confidence follows the same incentive logic as tool selection and required steps. If evaluation does not penalize overconfidence, the system has no incentive to calibrate it. In fact, presenting answers with certainty can often be advantageous, because it makes outputs appear more coherent and complete, even when they are not.
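One standard way to make evaluation penalize overconfidence is a proper scoring rule such as the Brier score: the mean squared difference between stated confidence and the 0/1 outcome. The sketch below assumes each answer comes with a confidence in [0, 1] and compares two hypothetical runs with identical accuracy.

```python
def brier_score(confidences: list[float], correct: list[bool]) -> float:
    """Mean squared gap between stated confidence and the 0/1 outcome (lower is better).

    A confidently wrong answer (confidence near 1, outcome 0) costs close to 1.0,
    while a hedged wrong answer costs much less.
    """
    if not confidences or len(confidences) != len(correct):
        raise ValueError("need one correctness label per confidence")
    return sum((c - float(ok)) ** 2 for c, ok in zip(confidences, correct)) / len(confidences)


# Two runs with identical accuracy (one right, one wrong), different confidence on the miss.
overconfident = brier_score([0.95, 0.95], [True, False])
hedged = brier_score([0.95, 0.40], [True, False])
print(round(overconfident, 2), round(hedged, 2))  # 0.45 0.08
```

Because the penalty grows with the square of the gap, the metric rewards expressing uncertainty on answers that turn out to be wrong, which is exactly the behavior an accuracy-only eval ignores.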

Summary:

- Models are often confidently wrong, not just wrong

- Confidence is rarely evaluated but heavily relied on

- Miscalibration amplifies downstream risk

A shared pattern: optimizing for the wrong objective

These three failure modes—incorrect tool use, skipped steps, and miscalibrated confidence—may appear distinct, but they share a common underlying cause. In each case, the system is optimizing for producing a correct answer, rather than for behaving correctly.

More importantly, it is doing exactly what the evaluation regime encourages. These systems are not failing despite passing evals. They are often failing because they were optimized to pass them. When correctness is defined purely at the level of output, the system learns to satisfy that definition by any means available, including shortcuts, skipped steps, and misplaced confidence.

This is not surprising. It is a direct consequence of how these systems are trained and evaluated. If the primary objective is to maximize output correctness, the system will learn whatever strategies are most effective for achieving that goal, including strategies that bypass intended processes, exploit shortcuts, or mask uncertainty.

In this sense, the behavior is less a failure of the model than a misalignment between the evaluation criteria and the desired system behavior.

The difficulty arises because output correctness is an incomplete proxy for system reliability. It captures whether the system can produce the right answer under certain conditions, but it does not capture whether the system can do so consistently, transparently, and in accordance with its intended design.

As systems become more complex, this gap becomes more pronounced. Multi-step workflows, tool integrations, and autonomous decision-making introduce layers of behavior that are not reflected in the final output alone. Evaluating only the output is equivalent to evaluating a program based solely on its return value, without considering how it reached that result.

Summary:

- Systems optimize for what evals measure

- Output correctness is an incomplete objective

- Evals can actively produce the failures they miss

Rethinking evaluation

None of this implies that output-based evaluation is unnecessary. On the contrary, it remains a foundational component of any evaluation strategy. But it must be supplemented with measures that capture system behavior.

This includes evaluating:

- whether the correct tools and data sources were used

- whether required steps and constraints were followed

- whether confidence and uncertainty are appropriately calibrated

These are not trivial additions. They require instrumentation, visibility into intermediate states, and a more nuanced understanding of what constitutes correct behavior. But without them, evaluation remains incomplete.
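Checks like those in the list above depend on having a trace to inspect. A minimal way to get one is to wrap each tool or step so that every call is recorded. The sketch uses a module-level list and a decorator purely for illustration; `traced`, `TRACE`, and the stand-in step functions are hypothetical, and a production system would more likely rely on the tracing built into its agent framework or observability tooling.

```python
import functools
from typing import Any, Callable

# Illustrative in-memory trace; one dict per completed step call.
TRACE: list[dict[str, Any]] = []


def traced(step_name: str) -> Callable:
    """Decorator that appends a record to TRACE each time the wrapped step returns.

    Records are written on completion, so nested calls appear innermost-first.
    """
    def decorator(fn: Callable) -> Callable:
        @functools.wraps(fn)
        def wrapper(*args, **kwargs):
            result = fn(*args, **kwargs)
            TRACE.append({"step": step_name, "args": args, "kwargs": kwargs, "result": result})
            return result
        return wrapper
    return decorator


@traced("retrieve_context")
def retrieve_context(query: str) -> list[str]:
    return [f"doc about {query}"]  # stand-in for a real retriever


@traced("generate_answer")
def generate_answer(query: str, context: list[str]) -> str:
    return f"answer to {query} using {len(context)} docs"  # stand-in for a model call


def agent(query: str) -> str:
    return generate_answer(query, retrieve_context(query))


TRACE.clear()
output = agent("refund window")
print([event["step"] for event in TRACE])  # ['retrieve_context', 'generate_answer']
```

The resulting list of step names is the kind of input that tool-use and required-step checks can then be run against, alongside the usual comparison of the final output.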

The goal is not to eliminate all failure modes. That is not realistic. The goal is to make those failures visible, understandable, and manageable. A system that fails transparently and predictably is far easier to work with than one that appears correct until it suddenly is not.

Summary:

- Output evals are necessary but insufficient

- Behavior must be explicitly evaluated

- Visibility into the system is required

Closing

If you have worked with AI systems in practice, you have likely encountered situations where everything appears to be working, but something feels off. The outputs are correct, the tests are passing, and yet the system does not inspire confidence.

That intuition is often pointing to a real issue. It reflects the gap between what the system produces and how it behaves.

As long as evaluation focuses primarily on output correctness, that gap will persist. Systems will continue to pass tests while exhibiting behavior that is fragile, inconsistent, or misleading.

Understanding and addressing that gap is not just a matter of improving metrics. It is a matter of redefining what it means for a system to be correct.

Because in real-world applications, correctness is not just about the answer. It is about the path taken to get there, and whether that path can be trusted.

Summary:

- Passing evals ≠ reliable system behavior

- The gap shows up in production, not tests

- Trust depends on how the system behaves, not just what it outputs

Want a teardown of your eval setup?

If you're seeing behavior that “looks correct” but feels off, that’s usually where the real failures are hiding.

👉 Request a teardown and I’ll take a look at your system and where your evals might be missing critical failure modes.

Do you want to brainstorm how to evaluate your LLM application? Ask us anything in our Discord. I might give you an "aha!" moment, who knows?

The Eval Platform for AI Quality & Observability

Confident AI is the leading platform to evaluate AI apps on the cloud, with metrics open-sourced through DeepEval.