AI Connections let you run evaluations directly on the platform by connecting to your AI app via an HTTPS endpoint. Instead of writing code, you can trigger evaluations with the click of a button: Confident AI calls your endpoint with data from your goldens and parses the response.

To create an AI connection:

Your AI connection won’t be usable yet—you still need to configure the endpoint, payload, and at minimum the actual output key path.

There are several parameters you’ll need to configure in order for your AI connection to work.

Give your AI connection a unique name to identify it within your project.

Your AI app must be accessible via an HTTPS endpoint that accepts POST requests and returns any response containing the actual output of your AI app.

You can choose between HTTP Response, HTTP Streaming, and SSE Streaming modes.

The default mode is HTTP Response.

If your endpoint returns a custom response format, such as JSON, you can either configure the actual output key path or use a transformer to extract the actual output from the response. See Actual Output Key Path and Transformers for more details.

For SSE Streaming and HTTP Streaming modes, your endpoint can return strings, JSON objects, or custom formats. Custom formats can be processed chunk by chunk using transformers.

For string chunks, Confident AI collects all chunks and returns a single string.

Your model generates:

Confident AI response:
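To illustrate the string-chunk behavior described above, here is a minimal sketch of collecting chunks into a single string. The chunk values and the function name `collect_string_chunks` are made up for illustration; the actual collection happens on the platform and may differ in detail.

```python
def collect_string_chunks(chunks):
    """Concatenate streamed string chunks, in arrival order, into one string."""
    return "".join(chunks)


# Hypothetical chunks streamed by a model, one per streaming event.
streamed = ["Hello", ", ", "world", "!"]
combined = collect_string_chunks(streamed)
```

Here `combined` holds the full text that would be treated as the actual output.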

Configure the payload that gets sent to your endpoint when Confident AI calls it. JSON mode lets you map available variables into a JSON structure, while the Code editor lets you write a Python function for conditional logic, data transformation, or full programmatic control over the request body.

JSON mode lets you define a payload using available variables. You can nest values to match your endpoint’s expected structure.

Available variables:

Use golden.* variables for single-turn evaluations and conversationalGolden.* variables for multi-turn evaluations. See Prompts for details on how to use the prompts dictionary, and Hyperparameters for passing hyperparameters to your endpoint.

Example payload:

The custom payload feature lets you structure the request to match your existing API contract—no need to modify your AI app to accept a specific format.
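As a rough sketch of what nesting values to match an existing API contract can look like, the Python below builds a request body for a hypothetical endpoint. The field names `messages` and `config`, and the function `build_request_body`, are assumptions for illustration only. This is not the platform's JSON template syntax or the Code editor's required function signature.

```python
import json


def build_request_body(golden_input, hyperparameters):
    """Nest values to match a hypothetical endpoint contract (assumed shape)."""
    return {
        "messages": [{"role": "user", "content": golden_input}],
        "config": dict(hyperparameters),
    }


body = build_request_body("What is the capital of France?", {"temperature": "0.2"})
serialized = json.dumps(body)  # the JSON text that would be POSTed
```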

Add any custom headers required by your endpoint as key-value pairs, such as API keys or content type specifications. These headers are sent with every request to your AI app.

Configure authentication for requests to your AI app endpoint. The Authorization tab has two sections: Secrets Manager and Authentication.

A secrets manager lets you securely retrieve authentication credentials at runtime from a cloud vault, instead of storing them directly on the platform.

To enable a secrets manager:

Example vault URL: https://your-vault.vault.azure.net

For self-hosted deployments, the secrets manager is always enabled and uses managed identities for authentication, so no secrets provider credentials are required.

Select an authentication type from the dropdown:

Auth0 requires the following fields:

HMAC requires the following fields:

You can use a secrets manager with Auth0 to store your client credentials in a key vault. Instead of entering the actual Client ID and Client Secret, provide the names of the secrets in your vault and they will be retrieved at runtime.

Associate prompt versions with your AI connection. When running evaluations, these prompts will be attributed to each test run, letting you trace results back to the prompts used.

The prompts variable in your payload is a dictionary where each key maps to an object containing alias and version:

Here’s an example of how your Python endpoint might handle the prompts dictionary:
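A minimal sketch of reading the prompts dictionary, assuming the payload arrives as a parsed JSON object with a top-level `prompts` key. The key `system_prompt`, the alias, and the version string are hypothetical values, and `describe_prompts` is an illustrative helper rather than part of any SDK.

```python
def describe_prompts(payload):
    """Return {key: (alias, version)} for every entry in payload["prompts"]."""
    prompts = payload.get("prompts") or {}
    return {key: (entry["alias"], entry["version"]) for key, entry in prompts.items()}


# Hypothetical request body as received by the endpoint.
example_payload = {
    "input": "Summarize this article.",
    "prompts": {
        "system_prompt": {"alias": "summarizer-system", "version": "00.00.01"},
    },
}
resolved = describe_prompts(example_payload)
```

Your endpoint could then use each alias and version pair to load the matching prompt before calling the model.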

For more details on working with prompts, see Prompt Versioning.

Define optional hyperparameters as string key-value pairs. These are sent to your endpoint as part of the payload and are also logged in test runs and experiments, making it easy to track which configuration was used for each evaluation.

Hyperparameters are useful for passing model configuration values like temperature, model_name, or max_tokens to your AI app without hardcoding them into your endpoint. Since they’re logged alongside test run and experiment results, you can compare how different hyperparameter values affect evaluation outcomes.

The hyperparameters variable in your payload is a dictionary where both keys and values are strings:

Here’s an example of how your Python endpoint might use hyperparameters:

Hyperparameter values are always strings. Cast them to the appropriate type (e.g., float, int) in your endpoint as needed.
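Because every value arrives as a string, an endpoint typically casts them before use. The sketch below assumes the keys `model_name`, `temperature`, and `max_tokens` mentioned earlier; the default values and the function name `parse_hyperparameters` are illustrative assumptions.

```python
def parse_hyperparameters(hyperparameters):
    """Cast string hyperparameter values to the types a model call expects."""
    return {
        "model_name": hyperparameters.get("model_name", "example-model"),
        "temperature": float(hyperparameters.get("temperature", "0.0")),
        "max_tokens": int(hyperparameters.get("max_tokens", "256")),
    }


# Hypothetical hyperparameters dictionary taken from the request payload.
config = parse_hyperparameters({"temperature": "0.7", "max_tokens": "512"})
```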

Transformers are a powerful way to apply custom logic to the actual output of your AI app. You can use transformers to extract the actual output from a custom response format using custom Python code.

You can add your own transformers by navigating to Project Settings → Transformers and clicking Create Transformer.

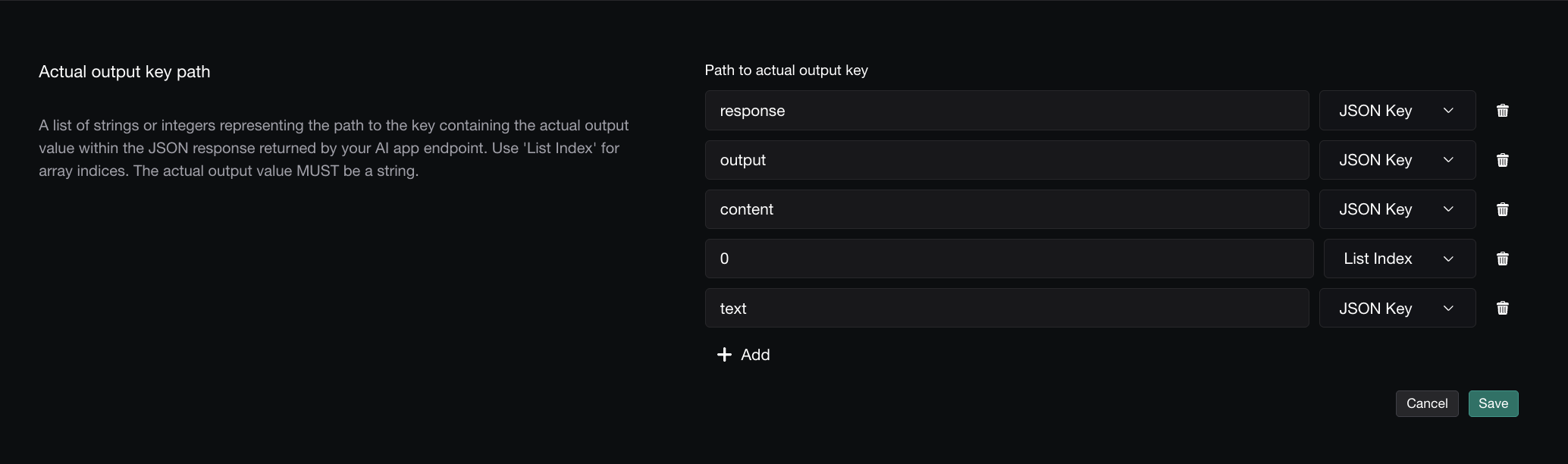

A list of strings or integers representing the path to the actual_output value in your JSON response. Use strings for JSON keys and integers for array indices. This is required for evaluation to work.

For example, if your endpoint returns:

Set the key path to ["response", "output"].

For nested arrays, use integers to specify the array index. For example, if your endpoint returns:

Set the key path to ["response", "output", "content", 0, "text"].
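To make the key path semantics concrete, the sketch below walks a key path through a parsed JSON response, using strings as dictionary keys and integers as list indices. The response body is a made-up example shaped to match the nested-array key path above, and `resolve_key_path` is an illustrative helper, not the platform's implementation.

```python
def resolve_key_path(response, key_path):
    """Follow each key (str) or index (int) in key_path and return the value found."""
    current = response
    for key in key_path:
        current = current[key]
    return current


# Hypothetical endpoint response matching the nested-array example.
response = {"response": {"output": {"content": [{"text": "Paris"}]}}}
answer = resolve_key_path(response, ["response", "output", "content", 0, "text"])
```

Running the same helper against a sample of your own endpoint's response is a quick way to check a key path before saving it.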

A list of strings or integers representing the path to the retrieval_context value in your JSON response. Use strings for JSON keys and integers for array indices. This is optional and only needed if you’re using RAG metrics. The value must be a list of strings.

For example, if your endpoint returns:

Set the key path to ["response", "retrieval_context"].
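Since the extracted value must be a list of strings, it can help to check the shape of your response before running RAG metrics. This is a small sketch with a hypothetical response body; `is_valid_retrieval_context` is an illustrative helper.

```python
def is_valid_retrieval_context(value):
    """Return True only if value is a list whose items are all strings."""
    return isinstance(value, list) and all(isinstance(item, str) for item in value)


# Hypothetical endpoint response containing retrieved passages.
response = {
    "response": {
        "output": "Paris is the capital of France.",
        "retrieval_context": ["France's capital is Paris.", "Paris lies on the Seine."],
    }
}
valid = is_valid_retrieval_context(response["response"]["retrieval_context"])
```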

A list of strings or integers representing the path to the tools_called value in your JSON response. Use strings for JSON keys and integers for array indices. This is optional and only needed if you’re using metrics that require a tool call parameter. The value must be a list of ToolCall objects.

For example, if your endpoint returns:

Set the key path to ["response", "tools_called"].

For more information on the structure of a tool call, refer to the official DeepEval documentation.

Set the maximum time (in seconds) that Confident AI will wait for your endpoint to respond before timing out. This helps prevent evaluations from hanging indefinitely if your AI connection is slow or unresponsive.

If your AI app performs complex operations or calls external services, you may need to increase the timeout to avoid premature failures.

Set the maximum number of concurrent requests that Confident AI will send to your endpoint at the same time. This helps prevent overwhelming your AI app during large evaluation runs.

Set the maximum number of times Confident AI will retry a failed request to your endpoint. This helps handle transient errors without failing the entire evaluation.

During multi-turn simulations, Confident AI calls your endpoint once per turn. You can use state to persist information—like a thread ID or session—across turns so your AI app can maintain context throughout the conversation.

On the first turn, the state variable in your payload will be empty since no prior state exists. If your endpoint returns a state object and the state key path successfully extracts it, that state will be included in the state payload variable from the second turn onwards.

To enable multi-turn state, include state in your payload configuration so it gets sent to your endpoint on each turn:

Here’s an example of how your endpoint might handle state:
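A minimal sketch of a turn handler that persists a thread ID across turns, assuming the payload contains `input` and `state` keys and that the response exposes state under a top-level `state` key. The field names `thread_id` and `turn_count`, the `output` key, and the echo reply are illustrative assumptions standing in for a real model call.

```python
import uuid


def handle_turn(payload):
    """Reuse the thread ID from prior state, or create one on the first turn."""
    state = payload.get("state") or {}  # empty on the first turn
    thread_id = state.get("thread_id") or str(uuid.uuid4())
    turn_count = state.get("turn_count", 0) + 1
    reply = f"(turn {turn_count}) You said: {payload.get('input', '')}"
    return {
        "output": reply,
        "state": {"thread_id": thread_id, "turn_count": turn_count},
    }


first = handle_turn({"input": "Hi", "state": None})
second = handle_turn({"input": "And again", "state": first["state"]})
```

The second call receives the state returned by the first, which mirrors how the extracted state is passed back from the second turn onwards.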

The state key path works just like the actual output key path—a list of strings or integers representing the path to the state object in your JSON response. This tells Confident AI where to extract state from your endpoint’s response so it can be passed back on the next turn.

For example, if your endpoint returns:

Set the state key path to ["state"].

State is only relevant for multi-turn evaluations (simulations). For single-turn evaluations, you can ignore this setting entirely.

For single-turn evaluations, you can link each test case to its corresponding trace for full observability. This is done by including testCaseId in your payload (enabled by default) and passing it to your tracing setup.

Include testCaseId in your payload configuration and ensure your endpoint is set up to accept it.

Then, pass the testCaseId to your tracing implementation:

Once linked, you can view the full trace for each test case directly from the evaluation results, making it easy to debug failures and understand model behavior.

For multi-turn evaluations, Confident AI calls your endpoint once per turn. Each turn has its own turnId that you can pass to your tracing setup. This links each turn’s trace to the specific turn in the conversation, letting you view traces per-turn from the evaluation results.

Include turnId in your payload configuration:

Then, pass the turnId to your tracing implementation:

With turnId linked, you can click “Open trace” on any individual turn in the evaluation results to see the full trace for that specific turn.

For multi-turn red-team attack methods (e.g. Linear Jailbreaking, Crescendo Jailbreaking, Tree Jailbreaking, Sequential Jailbreak, Bad Likert Judge), Confident AI calls your endpoint once per turn, and every call produces its own trace. To group all of those turn-level traces into a single conversation in the assessment view, the platform sends a threadId in the payload. Attach it to your traces and the assessment side drawer will open them as one thread.

threadId is only sent for multi-turn red-team attacks. Single-turn attacks and standard evaluations don’t include it, so your endpoint can safely treat it as optional.

Include threadId in your payload configuration:

Then, pass the threadId to your tracing implementation:
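Because threadId is optional, the endpoint should read it defensively. The sketch below only shows that extraction step; `trace_metadata` and the `thread_id` metadata key are hypothetical, and the actual call that attaches the value to a trace depends on your tracing SDK and is not shown here.

```python
def trace_metadata(payload):
    """Build metadata for a hypothetical tracing call, omitting thread_id when absent."""
    metadata = {}
    thread_id = payload.get("threadId")
    if thread_id:  # missing, None, or empty string: leave it out
        metadata["thread_id"] = thread_id
    return metadata


with_thread = trace_metadata({"input": "hello", "threadId": "thread-123"})
without_thread = trace_metadata({"input": "hello"})
```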

Once linked, the assessment side drawer for a multi-turn test case in a risk assessment will show a link to your thread.

After configuring your AI connection, click Ping Endpoint to verify everything is set up correctly. You should receive a 200 status response. If not, check the error message and adjust your configuration accordingly.