TGIF! Thank god it’s features, here’s what we shipped this week:

Queues now know who to call, dashboards picked up every chart shape known to humankind, and traces went multimodal. Plus a stack of reliability fixes quietly landed underneath.

TGIF! Thank god it’s features, here’s what we shipped this week:

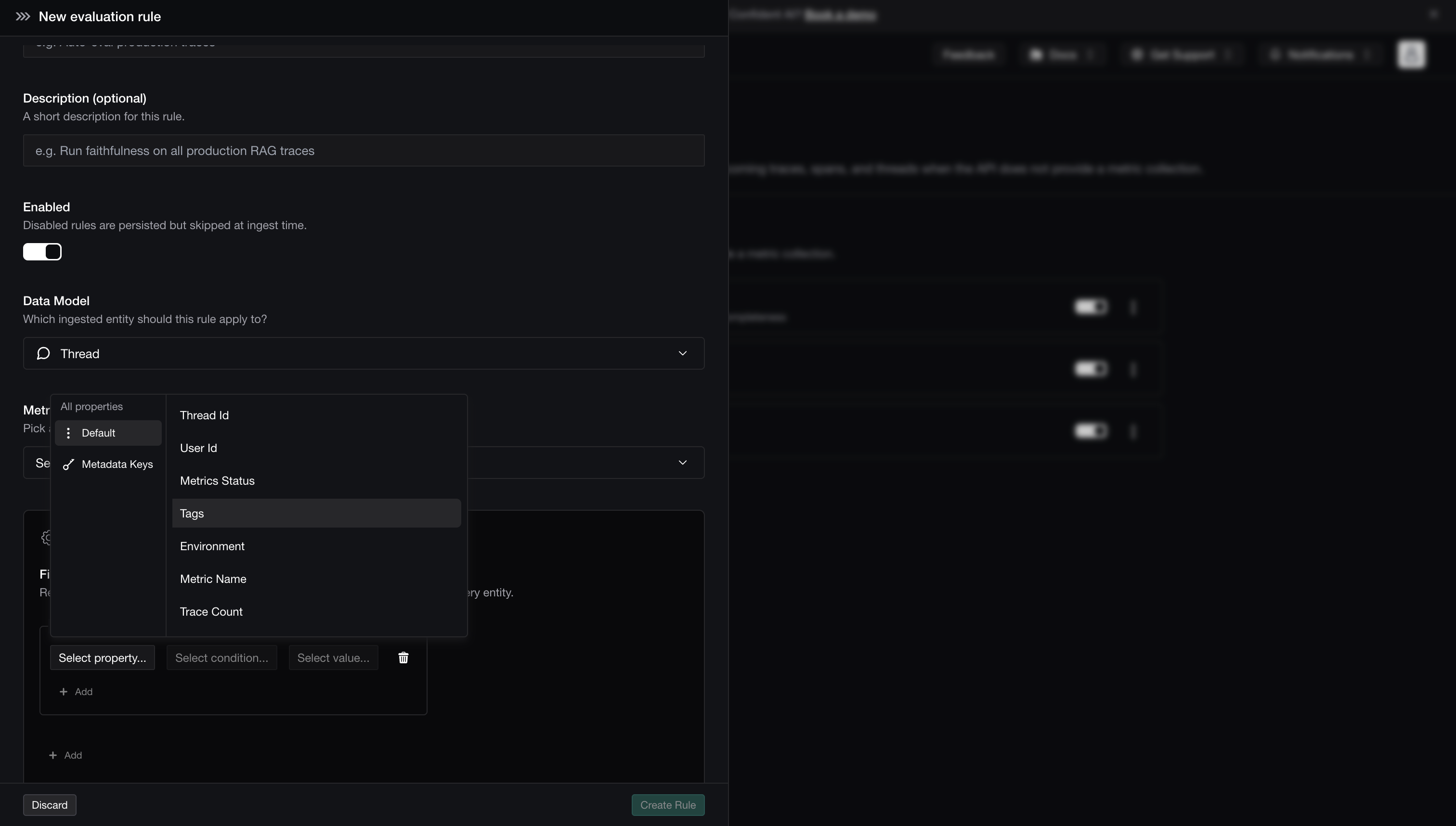

This week is about doing less. Online evals run themselves on rules you define in the UI, signals auto-classify into the issues actually showing up, and dataset reruns remember exactly how you set them up last time. Less wiring, more shipping.

TGIF! Thank god it’s features, here’s what we shipped this week:

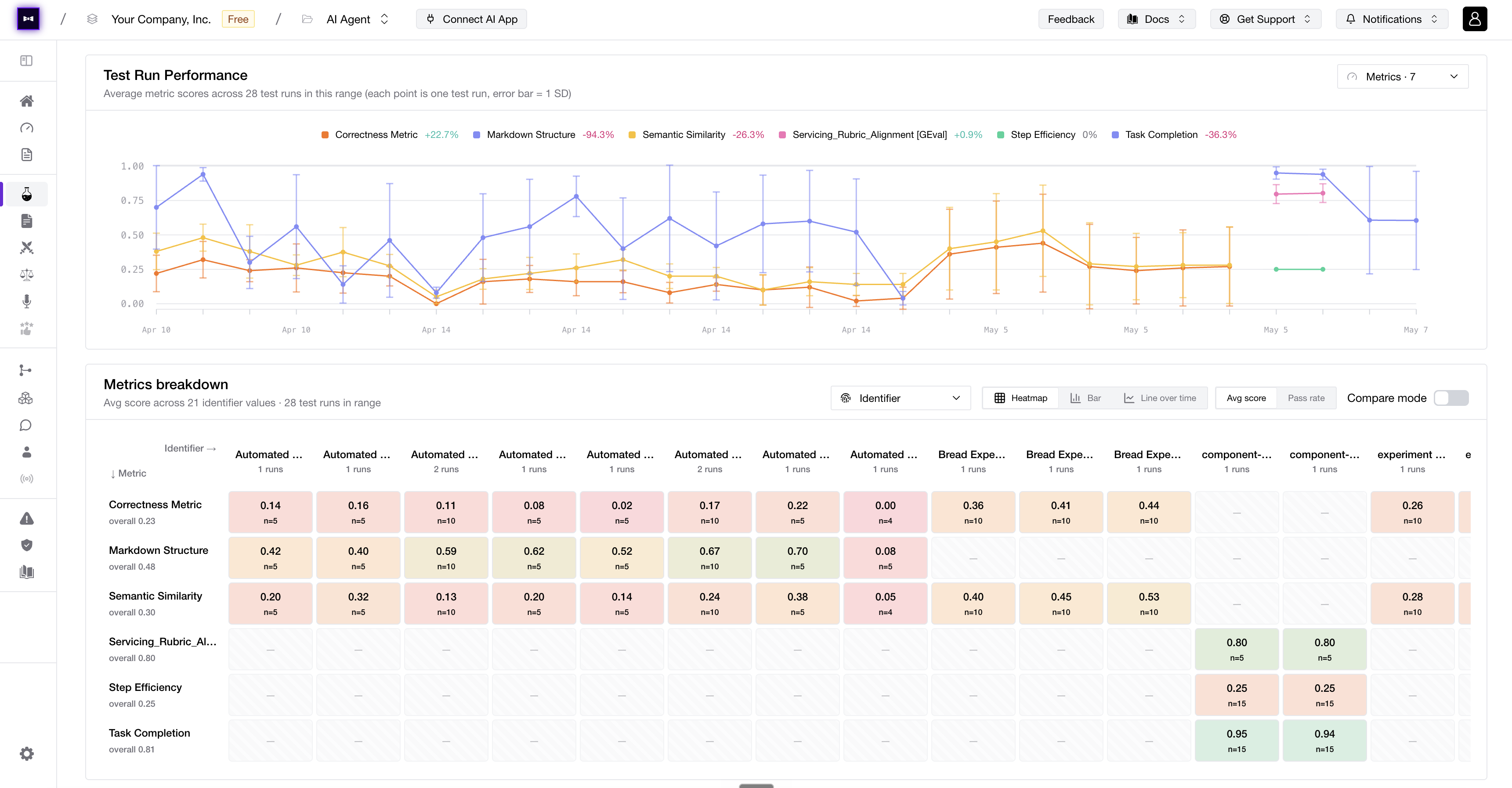

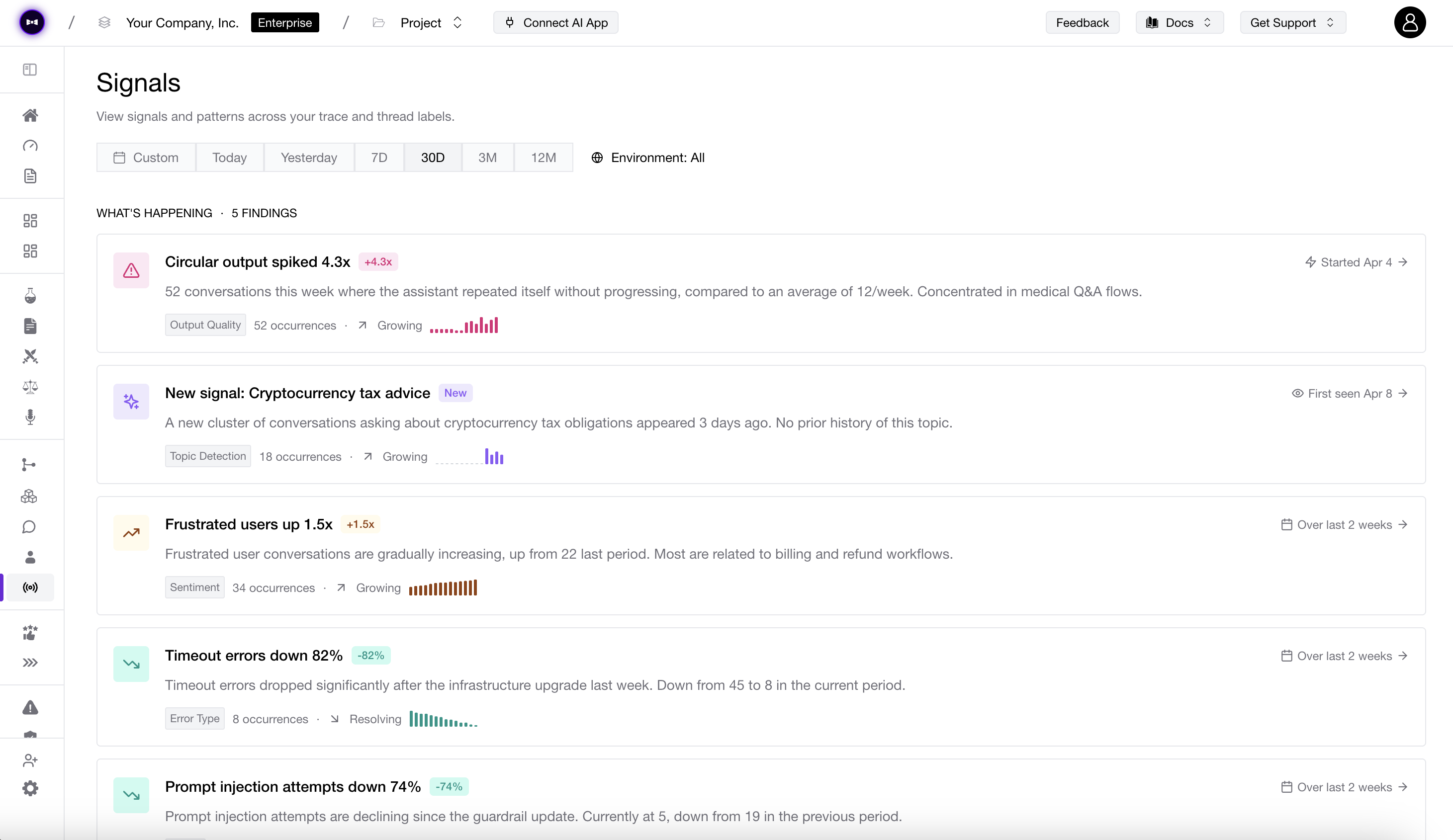

Welcome to Reliability Week. The plot has thickened—literally. Test Runs got a full analytics layer with heatmaps, bar graphs, and line-over-time charts that slice by any dimension you want (datasets, identifiers, hyperparams, models, prompts), so you can finally watch the trend instead of squinting at one run at a time. Offline Classification lets you classify traces and threads after the fact, and reclassify to backfill labels on data that came in before your rules existed. Auto-Surfaced Signals flips the question on its head—instead of you asking the data what’s wrong, the platform tells you. Multi-Turn Evals leveled up across the board with variable interpolation, streaming prompts, and AI Connections support. And the views you actually live in—regression testing, thread displayer, test cases, Observatory tables—got a wave of polish.

TGIF! Thank god it’s features, here’s what we shipped this week:

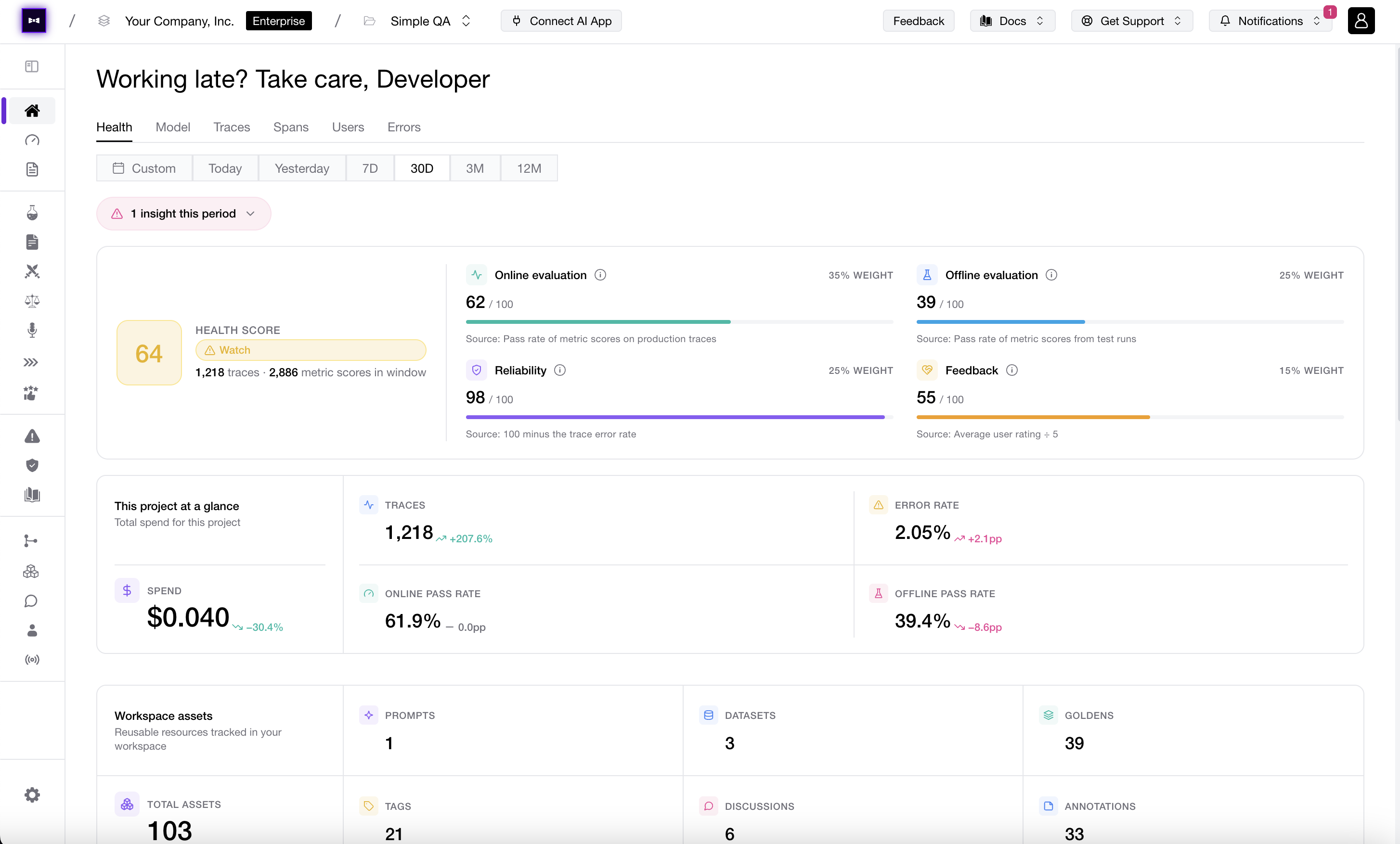

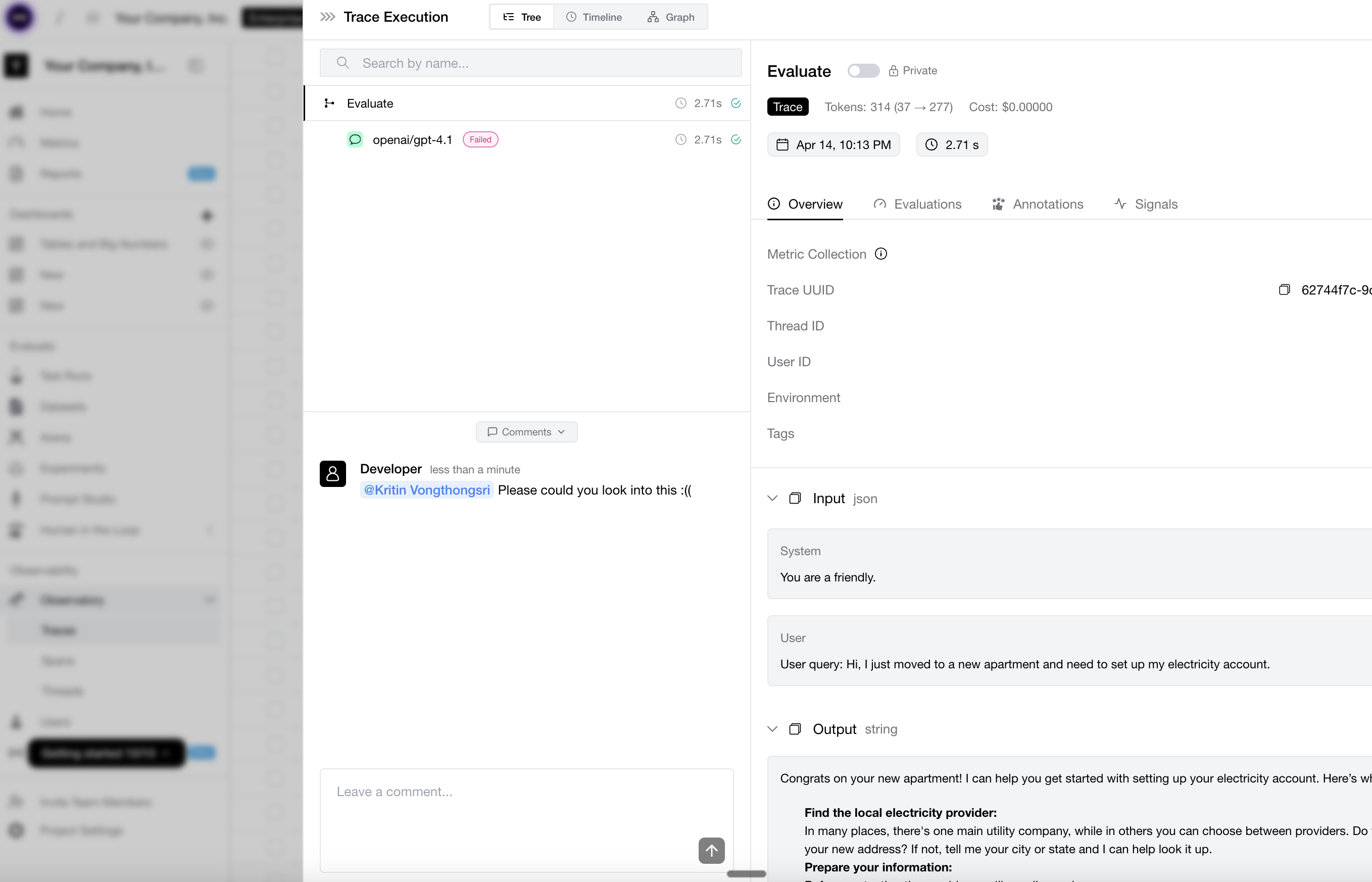

This week is about knowing when things are healthy, knowing exactly how risky they are, and knowing your API keys cannot accidentally do too much damage. Health Dashboards give you a live pulse on evals, error rates, cost, and the signals that tell you whether your AI system is chilling or quietly catching fire. Comment Notifications keep the collaboration loop moving when someone tags you on the thing that needs attention. Customizable risk assessments, attack methods, and vulnerabilities let you shape red teaming around the threats your app actually cares about. And on the platform side, API keys and model credentials got a serious security glow-up: read-only keys, cleaner credential flows, org/project scoping, and suffixes that make keys easier to recognize before someone pastes the wrong secret into the wrong place. Prevention: still less annoying than incident response.

Next week is Reliability Week. Bring a helmet.

TGIF! Thank god it’s features, here’s what we shipped this week:

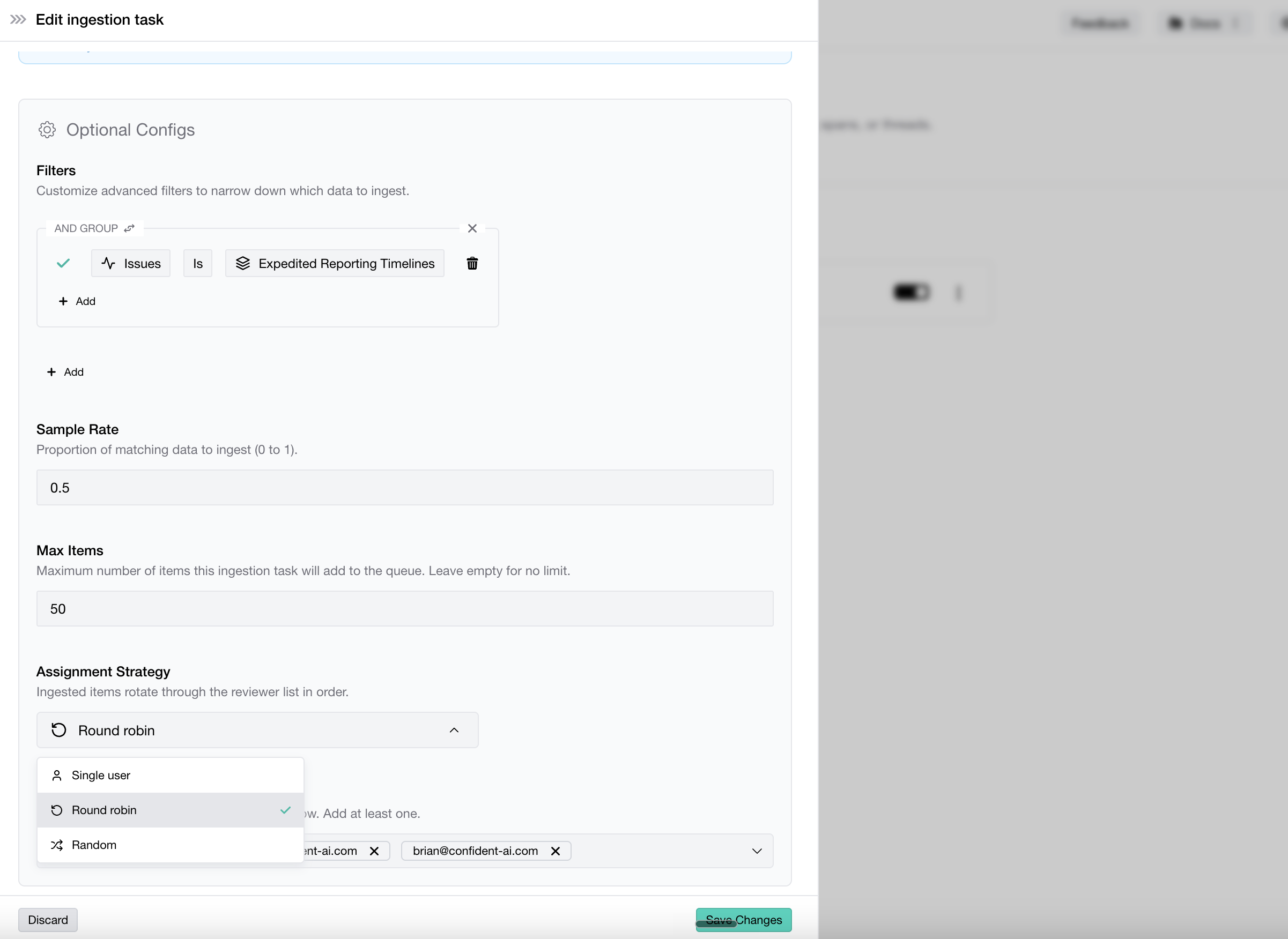

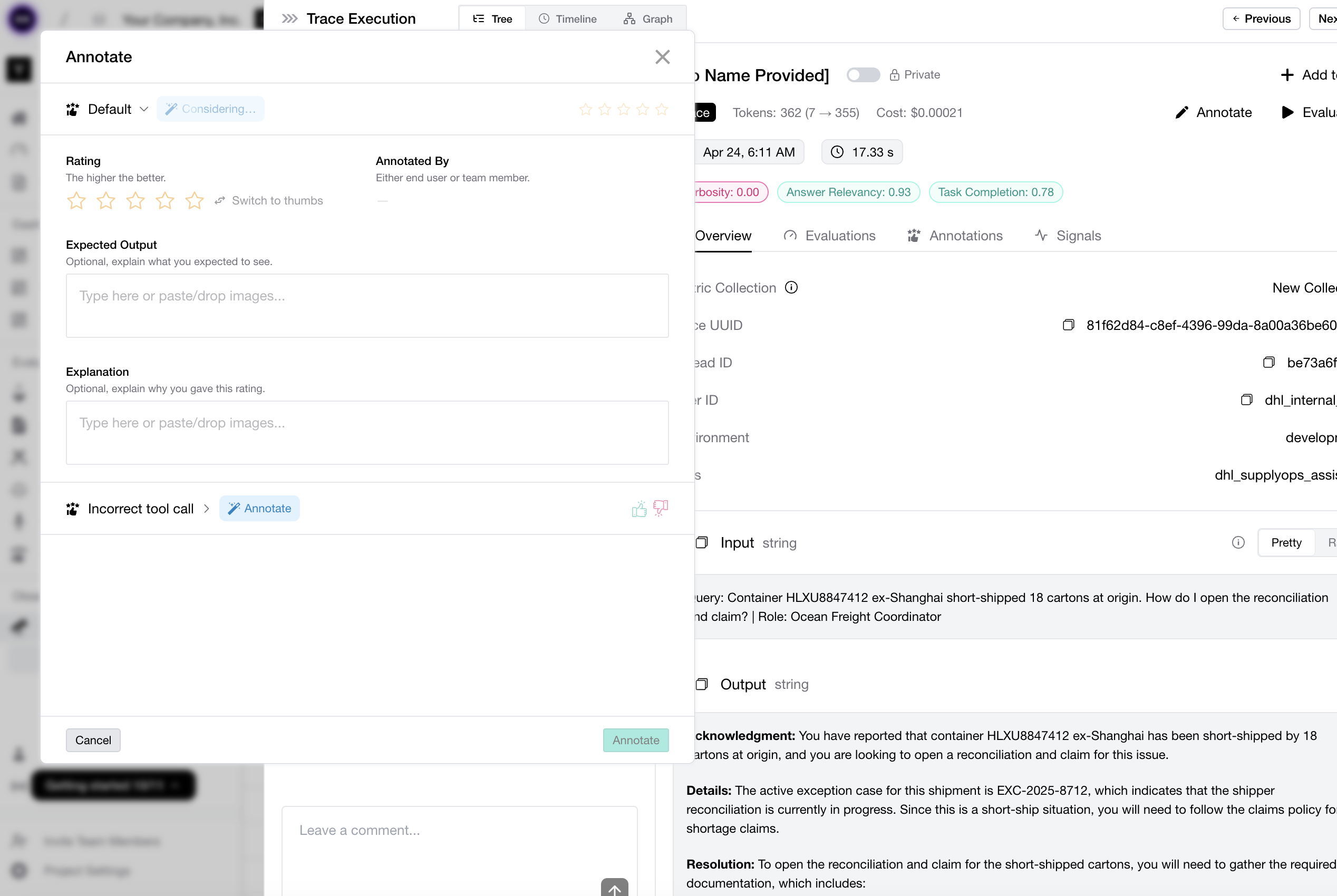

The best annotation work is the annotation work you never had to do. Auto-Annotate now takes the first pass across traces, spans, threads, and test cases, so your team can stop hand-labeling the obvious stuff and save human judgment for the weird, expensive, “why did the model say that?” moments. Multi-turn workflows got more automatic too: threads can become datasets with scenarios, ingestion tasks keep them fresh, and platform models can jump straight into simulations. Oh, and three beta stickers hit the floor this week: Code Execution, Queue Automations, and Dataset Workflows are officially stable. Less clicking. More knowing.

TGIF! Thank god it’s features, here’s what we shipped this week:

Confident AI goes multiplayer and kills the context switch while it’s at it. Comments are now live across traces, spans, threads, and test cases, and when someone @-mentions you, it lands in your Slack with a direct link back to the exact trace. No more “screenshot this span and DM it to me,” no more five-tab scavenger hunts, no more “wait, which trace ID?” The conversation happens exactly where the data lives. That loop works because we also gave Slack & Discord a full glow-up this week: one-click setup and way more signals you can pipe through. And to the voice AI crowd: WebSocket response mode for AI Connections just shipped. We’re coming for you. Custom Dashboards also picked up enough new widgets that the beta sticker is barely hanging on. Oh, and Claude Opus 4.7 is now available everywhere: Arena, Experiments, Evaluations, Platform. Plus, there’s Prompt Auto-Refinement on failing test cases, traces, and spans, and image support on annotations. Scroll down, there’s a lot.

TGIF! Thank god it’s features, here’s what we shipped this week:

Did you miss us? We missed you more, especially after last week’s Launch Week! We’re back with a loaded drop: Signals is in public beta—forget pre-defining metrics, Signals automatically surfaces issues, sentiment, and patterns across all incoming traces so you know what actually matters before you decide how to measure it. Confident Agent is live—a relay service that lets you expose internal endpoints to Confident AI without opening them to the public internet, so AI Connections just work with no security approvals or firewall hoops. Executive Reports enter public beta too: define your business KPIs and get daily generated reports against them. And for the org-level view: the Organization Governance Page lets you compare every project side by side on cost, metrics, annotations, and more.

TGIF! Thank god it’s features, here’s what we shipped this week:

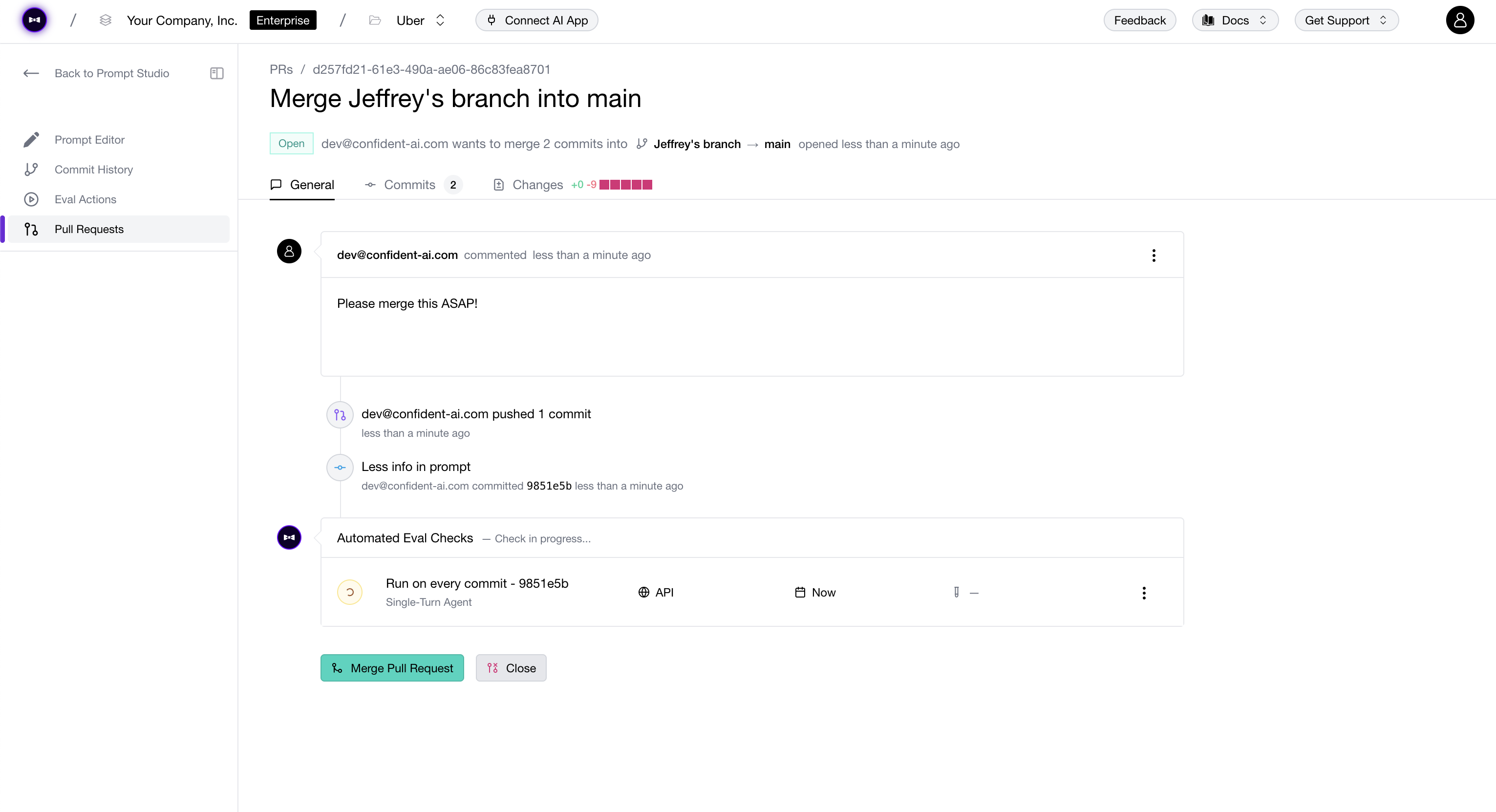

The one you’ve been holding your breath for: Prompt Pull Requests & Approval Workflows are finally live—raise a PR on your prompt branch, let reviewers inspect diffs and eval results before signing off, and get a full audit trail of every change. AI Connections also got a major upgrade: a Postman-style layout, Auth0 and HMAC authorization, and direct trace linking to individual turns in multi-turn test runs. Plus: Thread Categorization with a configurable sample rate, and red teaming progress bars with more progress.
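If you are putting HMAC in front of your own endpoint, here is a rough sketch of what request signing and verification usually look like. Everything in it is an assumption for illustration: the function names are hypothetical, and signing the raw request body with SHA-256 and a hex digest is just one common scheme, not necessarily how AI Connections signs requests. Check the AI Connections docs for the actual header and format.

```python
import hashlib
import hmac


def sign_body(secret: bytes, body: bytes) -> str:
    """Return a hex HMAC-SHA256 signature of the raw request body.

    Assumed scheme for illustration only; the real signing input
    (body, timestamp, path, ...) depends on the sender.
    """
    return hmac.new(secret, body, hashlib.sha256).hexdigest()


def verify_signature(secret: bytes, body: bytes, received_signature: str) -> bool:
    """Recompute the signature and compare it in constant time."""
    expected = sign_body(secret, body)
    # compare_digest avoids leaking how many leading characters matched.
    return hmac.compare_digest(expected.encode(), received_signature.encode())


if __name__ == "__main__":
    shared_secret = b"replace-with-your-shared-secret"  # hypothetical value
    raw_body = b'{"input": "hello"}'

    signature = sign_body(shared_secret, raw_body)
    print(verify_signature(shared_secret, raw_body, signature))
    print(verify_signature(shared_secret, raw_body + b" ", signature))
```

The one design choice worth copying is the comparison: verify against the raw bytes you received, before any JSON parsing, and use `hmac.compare_digest` rather than `==`.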

TGIF! Thank god it’s features, here’s what we shipped this week:

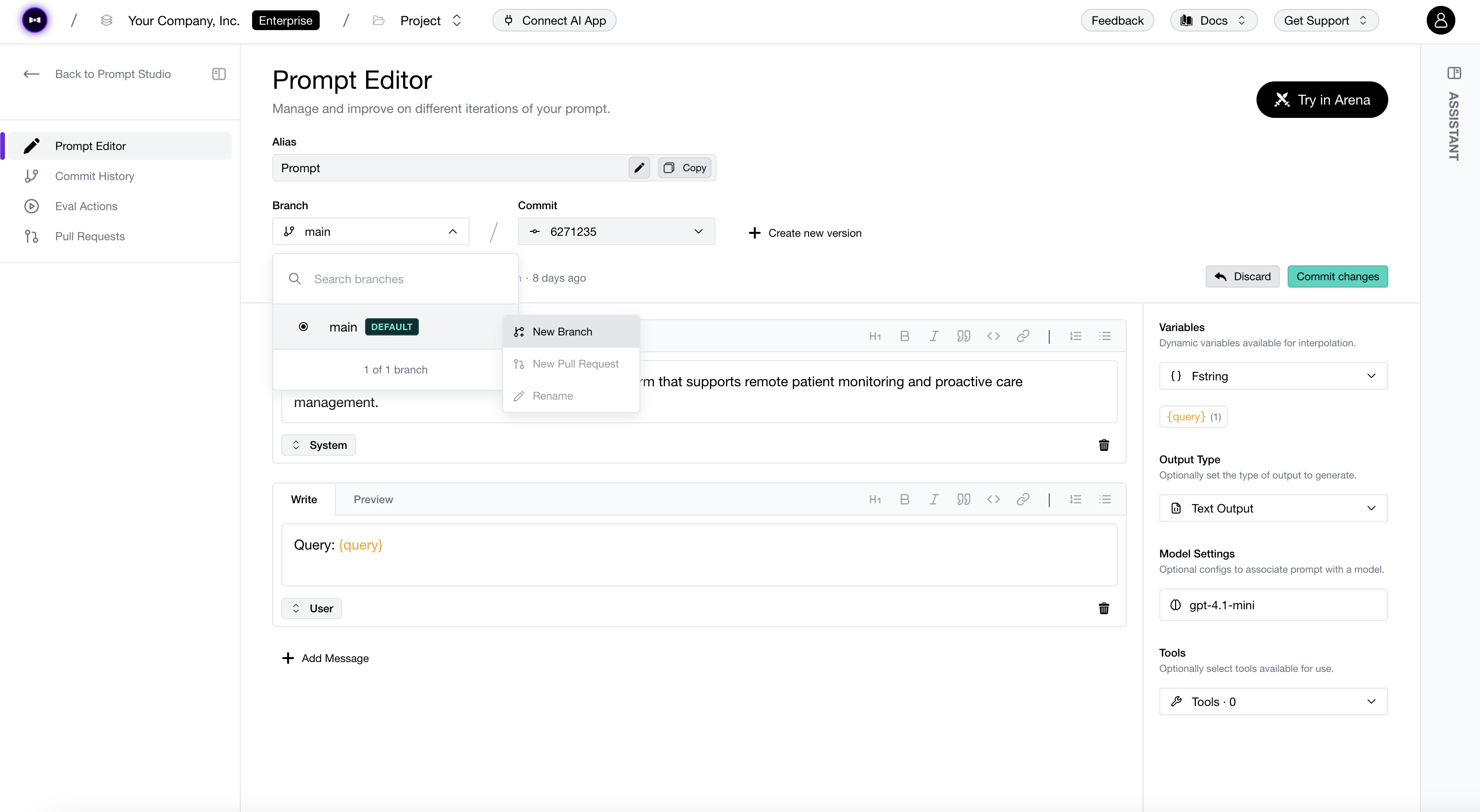

Buckle up—this is a big one. Prompt Branches bring proper version-control workflows to your prompts: branch, iterate, and merge without touching production. Custom Dashboards let you build your own Observatory views from scratch. Plus: OpenRouter and TrueFoundry are now available in Arena and Experiments, OpenInference tracing lands for Python and TypeScript, and enterprise auth gets a serious upgrade with HMAC & Auth0 support.

TGIF! Thank god it’s features, here’s what we shipped this week:

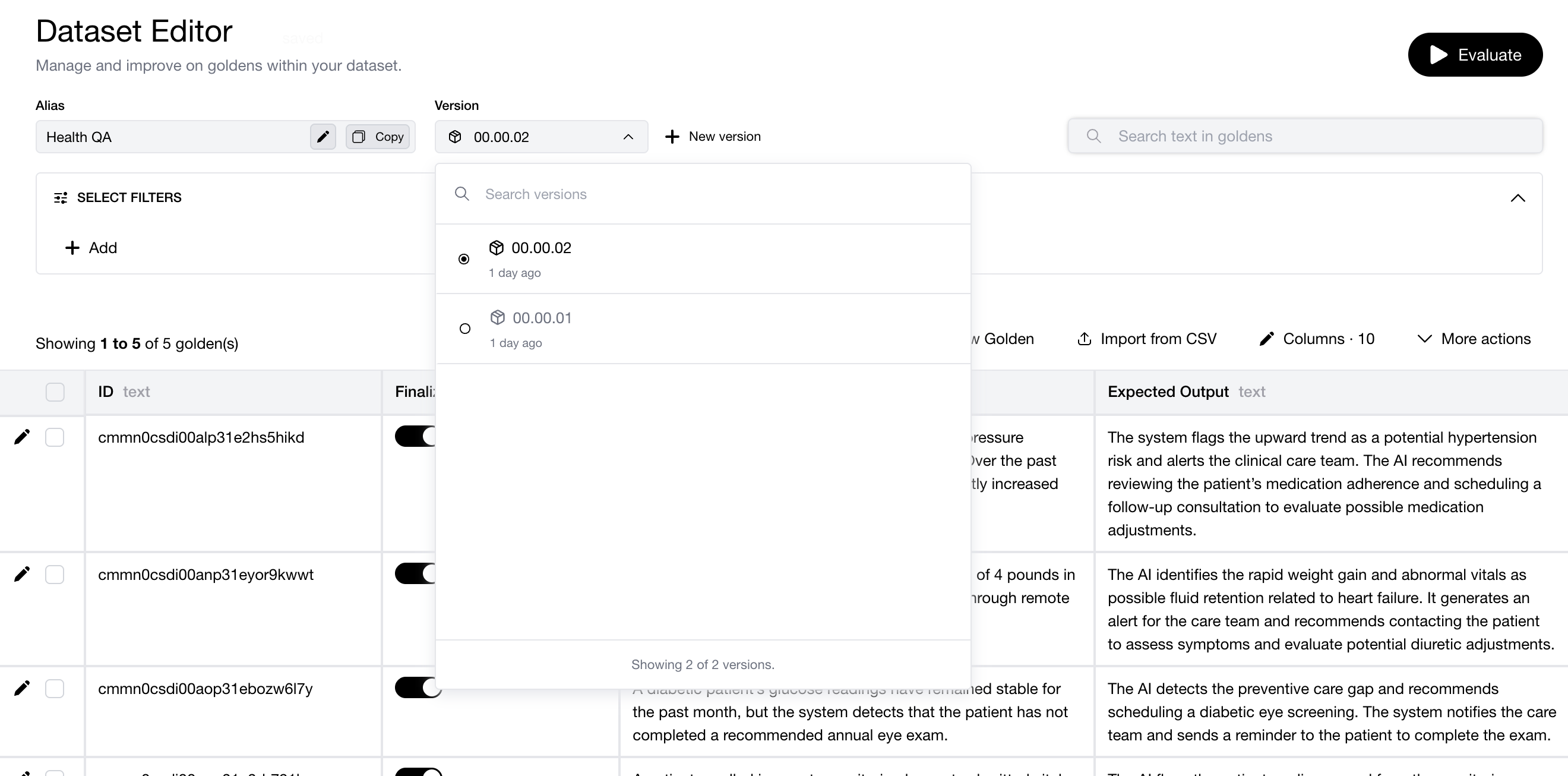

Datasets just got serious with Dataset Versioning—every change tracked, every version referenceable, no more “which dataset did we eval against?” Meanwhile, Replay Trace in Arena lets you re-run any production trace through Arena to compare models side-by-side on real traffic. And for the compliance-minded: Audit Logs are here.